Quick command to report on the IP addresses of all containers running on a Docker server:

docker ps | awk 'NR>1{ print $1 }' | xargs docker inspect -f '{{range .NetworkSettings.Networks}}{{$.Name}}{{" "}}{{.IPAddress}}{{end}}'

The new turkeys arrived today. Last year, USPS shipping was a horrible experience, so this year I called a few hatcheries to confirm they’ve been able to deliver healthy, happy poults. Meyers said they hadn’t had delivery problems, so we ordered 20 Black Spanish turkeys from them. They shipped yesterday, the shipping notice was delivered overnight, and the USPS clerk called at 6:30 this morning to let me know they arrived. Wow, was that early!

We got all the little ones into their brooder, fed, and watered (having more healthy birds seems to help because one little guy eats or drinks and a whole flock of little ones come over and copy it).

Scott set up one of the ESP32s that we use as environmental sensors to monitor the incubator. The incubator has an audible alarm if it gets too warm or cold, but that’s only useful if you’re pretty close to the unit. We had gotten a cheap rectangular incubator from Amazon, and it’s got some quirks. The display says C, but if we’ve got eggs in a box that’s 100C? They’d be cooked. The number is F, but the letter “C” follows it … there’s supposed to be a calibration menu option, but there isn’t. Worse, though, the temperature sensor is off by a few degrees. If calibration were an option, that wouldn’t be a big deal … but the only way we’re able to get the device over 97F is by taping the temperature probe up to the top of the unit.

So we’ve got a DHT11 sensor inside of the incubator, and the ESP32 sends temperature and humidity readings over MQTT to OpenHAB. There are text and audio alerts if the temperature or humidity isn’t in the “good” window. This means you can be out in the yard or away from home and still know if there’s a problem (since data is stored in a database table, we can even chart out the temperature or humidity across the entire incubation period).

We also bought a larger incubator for the chicken eggs — and there’s a new ESP32 and sensor in the larger incubator.

We tried a cheap forced-air incubator from Amazon.

All of these little rectangular boxes seem to have the same design flaw: the fan and heater are in one corner of the incubator, and the hot air blows out of one side of the box. So there are really hot spots in the incubator and relatively cold spots.

Since we had a bad hatch rate (1 of 8) with the thing, we decided to get a bigger, better incubator. After researching a lot of options, we got the Farm Innovators 4250: lots of space for eggs, a centrally located heater and fan that blow air all around, and a humidity sensor (looks to be the same DHT11 that we use in our sensor). We’ll collect eggs and get a large batch going in a few weeks.

We’ve been running the reverse osmosis system at about 95 PSI and concentrating the sap from around 2 percent sugar to 7-8 percent. That’s a lot of water being pulled out (and the water tests at 0 … so we’re not losing a measurable amount of sugar in the filtering process).
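As a rough check on how much water that is, here is a simple mass-balance sketch. It assumes all of the sugar stays in the concentrate (which the 0 reading on the removed water suggests), so the fraction of sap removed is 1 minus the starting concentration divided by the ending concentration. The function name is made up for illustration.

```shell
# Percent of the original sap (by mass) removed as water when concentrating
# from one sugar percentage to another, assuming no sugar is lost.
water_removed_pct() {
    awk -v c_in="$1" -v c_out="$2" \
        'BEGIN { printf "%.1f\n", (1 - c_in / c_out) * 100 }'
}

water_removed_pct 2 7   # 2 percent sap concentrated to 7 percent
water_removed_pct 2 8   # 2 percent sap concentrated to 8 percent
```

Under that assumption, going from 2 percent to 7-8 percent means roughly 71 to 75 percent of the sap comes out as water before it ever reaches the evaporator.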

We hit our maximum connection limit on some PostgreSQL servers — which made me wonder why the hot standby servers weren’t being used … well, at all. They’re equally big, expensive servers with loads of disk space. But they’re just sitting there “in case”.

So we directed some traffic over to the standby server. I’m also going to tweak a few settings related to user limits: increase the max connections, since these are dedicated hosts with plenty of available I/O, memory, and CPU; increase the number of reserved connections, since replication filled up all of the reserved slots; and implement a per-user connection limit on one account that runs a lot of threads. But directing some people who were only trying to look at data over to the standby server seemed like a quick fix.

Now, we discovered something interesting about how queries against the standby interact with replication. It makes a lot of sense when you start thinking about it. If you query the primary, there’s some blocking that goes on: the system isn’t going to vacuum data that you’re currently trying to use. The standby, however, doesn’t by default have any way to clue the primary in to the fact that you are trying to use some data. So the primary gets a delete, does its thing to hide those rows from future queries, and eventually auto-vacuum comes through and cleans up those rows. All of this gets replicated over to the standby … and there goes the data you were trying to read.

Odds of this happening on a query that takes eight seconds? Incredibly low! The odds increase, however, the longer a query runs. So some of our super massive reports started failing with the error “canceling statement due to conflict with recovery”.

There are two solutions in the PostgreSQL documentation. One is to increase the max_standby_streaming_delay value (there’s also an archive delay, but we aren’t particularly concerned about clients querying the server during recovery operations). The other is to avoid vacuuming data too quickly, either by setting hot_standby_feedback on the standby or by increasing vacuum_defer_cleanup_age on the primary (that setting was removed in PostgreSQL 16, so it only applies to older versions).
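For reference, a minimal sketch of how the standby-side settings could be applied with psql. The function name, host argument, postgres login, and the 5min default are all placeholders I picked for illustration, not values from our servers. Both settings can be picked up with a configuration reload.

```shell
# Sketch: set the two standby-side options and reload the configuration.
# Arguments: standby host name, optional delay (defaults to a placeholder 5min).
tune_standby_conflicts() {
    standby_host="$1"
    delay="${2:-5min}"
    psql -h "$standby_host" -U postgres -d postgres \
        -c "ALTER SYSTEM SET hot_standby_feedback = on" \
        -c "ALTER SYSTEM SET max_standby_streaming_delay = '$delay'" \
        -c "SELECT pg_reload_conf()"
}

# Example call with a hypothetical host name:
# tune_standby_conflicts standby.example.net 10min
```

Neither option is free: hot_standby_feedback can lead to table bloat on the primary because long-running standby queries hold back cleanup, and a larger max_standby_streaming_delay lets the standby fall further behind while it waits on those queries.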

There’s a third option too: don’t use the standby for long-running queries. That’s easily done in our case … and doesn’t require tweaking any PostgreSQL settings. Ad hoc reporting and direct user access really shouldn’t involve such substantial queries (it’s always good to have a SQL expert plan out and optimize complex queries if that’s an option).

Mix it all together and bake at 350F for 10-15 minutes. Allow to cool slightly (and firm up) before eating.

About 18 months ago, I got a Cosori CP267-FD food dehydrator. I’ve used it a few times to make mushroom jerky, and a few times to preserve fresh herbs … but, in the past few weeks, we’ve started using it a lot to dehydrate fruits. Recently, we came across a great deal on pineapples at Costco — and we picked up fifteen of them! So we’ve been chopping three pineapples a day, setting the pieces on the dehydrator trays, and dehydrating them at 135F for 10-12 hours. Thicker cuts get a great chewy texture, thinner cuts get crunchy.

PostgreSQL stores temporary files for in-flight queries. These don’t normally hang around for long, but sorting a large amount of data or building a large hash can create a lot of temp files. A dead query that was sorting a large amount of data or … well, we’ve gotten terabytes of temp files associated with multiple backend process IDs. The file names are algorithmic: the string “pgsql_tmp” followed by the backend PID, a period, and then some other number. Thus, I can extract the PID from each file name and provide a summary of the processes associated with temp files.

To view a summary of the temp files within the pgsql_tmp folder, run the following command to print a count then a PID number:

ls /path/to/pgdata/base/pgsql_tmp | sed -nr 's/pgsql_tmp([0-9]*)\.[0-9]*/\1/p' | sort | uniq -c

A slightly longer command can be used to reverse the columns – producing a list of process IDs followed by the count of files for that PID – too:

ls /path/to/pgdata/base/pgsql_tmp | sed -nr 's/pgsql_tmp([0-9]*)\.[0-9]*/\1/p' | sort | uniq -c | sort -k2nr | awk '{printf("%s\t%s\n",$2,$1)}'
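Since the real problem is disk space rather than file count, it can also help to total the bytes per PID. This is a sketch under a couple of assumptions: it relies on GNU find's -printf, and the function name is my own. It prints the PID, the number of files, and the total bytes, separated by tabs.

```shell
# Sum temp file count and bytes per backend PID in a pgsql_tmp directory.
# Output columns: PID <tab> file count <tab> total bytes (sorted by PID).
temp_usage_by_pid() {
    find "$1" -maxdepth 1 -type f -name 'pgsql_tmp*' -printf '%s %f\n' |
        awk '{
            pid = $2
            sub(/^pgsql_tmp/, "", pid)   # drop the fixed prefix
            sub(/\..*$/, "", pid)        # drop the period and trailing number
            files[pid]++
            bytes[pid] += $1
        }
        END {
            for (pid in files)
                printf "%s\t%d\t%.0f\n", pid, files[pid], bytes[pid]
        }' |
        sort -n
}

# Example call, using the same placeholder path as above:
# temp_usage_by_pid /path/to/pgdata/base/pgsql_tmp
```

Any PID in that list that no longer shows up in pg_stat_activity is a good candidate for being a leftover from a dead query.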

We’ve been seeing an error that prevents clients from connecting to PostgreSQL servers. Basically, all available connections are in use and the remaining connections are reserved for superuser and replication activity.

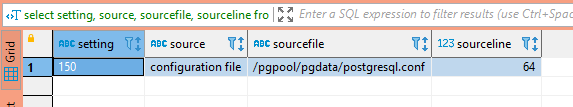

First, we need to determine what the connection limit is:

SELECT setting, source, sourcefile, sourceline FROM pg_settings WHERE name = 'max_connections';

And if there are any per-user connection limits – a limit of -1 means unlimited connections are allowed.

SELECT rolname, rolconnlimit FROM pg_roles;

The next step is to identify what connections are exhausting available connections – are there a lot of long-running queries? Are there just more active queries than anticipated? Are there a bunch of idle connections?

SELECT pid, usename, client_addr, client_port,
       to_char(pg_stat_activity.query_start, 'YYYY-MM-DD HH24:MI:SS') AS query_start,
       state, query
FROM pg_stat_activity
-- WHERE state = 'idle'
-- AND usename = 'app_user'
ORDER BY query_start;

In our case, there were over 100 idle connections using up about 77% of the available connections. Auto-vacuum, client read operations, and replication easily filled up the remaining available connections.
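To get that kind of breakdown without counting rows by hand, something like the following sketch groups the connections by user and state and shows each group as a percentage of max_connections. The function name, host argument, and postgres login are placeholders for illustration.

```shell
# Sketch: count connections per user and state, with each group's share
# of max_connections. Argument: database host name.
connections_by_state() {
    psql -h "$1" -U postgres -d postgres -c "
        SELECT usename, state, count(*) AS connections,
               round(100.0 * count(*) / current_setting('max_connections')::numeric, 1) AS pct_of_max
        FROM pg_stat_activity
        GROUP BY usename, state
        ORDER BY connections DESC;"
}

# Example call with a hypothetical host name:
# connections_by_state db.example.net
```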

Because the clients keeping these idle connections open are an app running in a Kubernetes cluster, there’s an extra layer of complexity in identifying where the connection is actually sourced. When you view the list of connections from the PostgreSQL server’s perspective, “client_addr” is the worker hosting the pod.

On the worker server, use conntrack to identify the actual source of the connection. The IP address in “-d” is the IP address of the PostgreSQL server. To isolate a specific connection, select a “client_port” from the list of connections (37900 in this case) and grep for the port. You will see the src IP of the individual pod.

lhost1750:~ # conntrack -L -f ipv4 -d 10.24.29.140 -o extended | grep 37900
ipv4 2 tcp 6 86394 ESTABLISHED src=10.244.4.80 dst=10.24.29.140 sport=37900 dport=5432 src=10.24.29.140 dst=10.24.29.155 sport=5432 dport=37900 [ASSURED] mark=0 use=1
conntrack v1.4.4 (conntrack-tools): 27 flow entries have been shown.
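A plain grep matches the port number anywhere on the line, so it could also catch unrelated entries. A slightly stricter sketch, assuming the extended output format shown above, compares only the first sport= field (the original direction of the flow) and prints the matching first src= address. The function name is made up for illustration.

```shell
# Read conntrack extended output on stdin and print the pod-side source IP
# for flows whose original source port matches the given client_port.
pod_ip_for_port() {
    awk -v port="$1" '
        {
            src = ""; sport = ""
            for (i = 1; i <= NF; i++) {
                # Keep only the first src= and sport= on the line; the second
                # pair describes the reply direction.
                if (src == "" && $i ~ /^src=/) src = substr($i, 5)
                if (sport == "" && $i ~ /^sport=/) sport = substr($i, 7)
            }
            if (sport == port) print src
        }'
}

# Example call on the worker, using the same addresses as above:
# conntrack -L -f ipv4 -d 10.24.29.140 -o extended | pod_ip_for_port 37900
```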

Then use kubectl to identify which pod is assigned that address:

lhost1745:~ # kubectl get po --all-namespaces -o wide | grep "10.244.4.80"
kstreams kafka-stream-app-deployment-1336-d8f7d7456-2n24x 2/2 Running 0 10d 10.244.4.80 lhost0.example.net <none> <none>

In this case, we’ve got an application automatically scaling up that can have 25 connections held open and idle … so there isn’t really a solution other than increasing the number of available connections to a number that’s appropriate given the number of client connections we plan on leaving open. I also want to enact a connection limit on the individual account: if there are 250 connections available on the PostgreSQL server, then limit the application to 200 of those connections.
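A sketch of what those two changes could look like through psql. The function name, host argument, and postgres login are placeholders; 250 and 200 are just the example numbers from above, and app_user is the example account name from the earlier query.

```shell
# Sketch: raise the server-wide limit and cap one role below it.
# Arguments: database host name, role name.
# Note: max_connections only takes effect after a server restart.
set_connection_limits() {
    db_host="$1"
    role="$2"
    psql -h "$db_host" -U postgres -d postgres \
        -c "ALTER SYSTEM SET max_connections = 250" \
        -c "ALTER ROLE \"$role\" CONNECTION LIMIT 200"
}

# Example call with a hypothetical host name:
# set_connection_limits db.example.net app_user
```

One thing to watch with a hot standby: its max_connections has to be at least as high as the primary's, so the standby should get the larger value first.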