Month: August 2018

Peanut butter and carob hummus

Ingredients

- 1 cup cooked garbanzo beans

- 1/4 cup peanut butter

- 1/4 cup carob powder

- 1/4 cup maple syrup

- pinch of sea salt

Method

Place everything in the bowl of a food processor and run until you’ve got a smooth paste.

To get really smooth hummus, I cook the garbanzo beans in a pressure cooker for about 35 minutes with at least 20 minutes of natural depressurization.

********************************************************************************

Anya says this tastes like peanut butter / chocolate / oat cookies, and it’s great for dipping anything that goes well with chocolate (strawberries, apples).

Simple Solutions

Saw an interesting note, after yet another school shooting, that said teachers eating lunch with students to maintain connection are able to identify individuals who could use intervention. Seems reasonable — I knew a few profs at Uni who ate in the student cafeteria instead of the faculty cafeteria. They were more engaged with the student body — kids would sit and chat with them, and rarely was the conversation limited to current class content.

Did you know … Sub-addressing

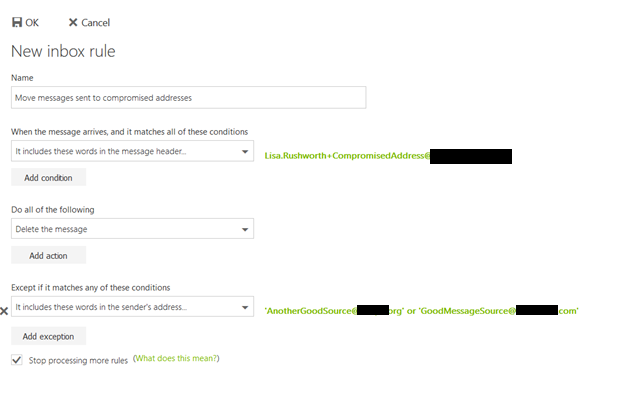

Sometimes you need to provide your company e-mail address – registering for a conference or training class, signing up for an industry newsletter. Unfortunately, this can lead to an inundation of unwanted mail.

Exchange Online supports something called “sub-addressing” (so does Gmail … and you can test your email service’s support of this feature by sending yourself a message from some other source. If it gets delivered, you’re good. If not … bummer!). Sub-addressing allows you to slightly modify your e-mail address to customize it for every situation – between your last name and the ‘@’ symbol, put a plus and then some unique text. It will look like Your.N.Ame+SomeIdentifier@domain.ccTLD instead of Your.N.Ame@domain.ccTLD.

When signing up for a Microsoft newsletter, I can tell them my e-mail address is Lisa.Rushworth+MicrosoftSecuritySlate@domain.ccTLD … and messages to that address will still be delivered to me. When I sign up for the NANPA code administration newsletter, I can tell them my e-mail address is Lisa.Rushworth+NANPACodeAdmin@domain.ccTLD.

Should you start receiving unwanted solicitations to the sub-address, you can then create a rule to delete messages sent to that address.
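A minimal sketch of the address mangling described above, in Python. The function names `make_subaddress` and `split_subaddress` are made up for illustration, and the sketch assumes the mail service uses `+` as the separator (some services use a different character or do not support sub-addressing at all).

```python
def make_subaddress(address, tag):
    """Insert '+tag' between the local part and the '@' of an address."""
    local, sep, domain = address.rpartition("@")
    if not sep or not local or not domain:
        raise ValueError(f"not a usable e-mail address: {address!r}")
    return f"{local}+{tag}@{domain}"


def split_subaddress(address):
    """Return (base_address, tag); tag is None when there is no '+' part."""
    local, _, domain = address.rpartition("@")
    base, plus, tag = local.partition("+")
    return f"{base}@{domain}", (tag if plus else None)


if __name__ == "__main__":
    tagged = make_subaddress("Lisa.Rushworth@domain.ccTLD", "NANPACodeAdmin")
    print(tagged)
    print(split_subaddress(tagged))
```

The tag returned by `split_subaddress` is the same piece of text a mailbox rule would match on when deciding which messages to delete.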

You can also alert the person to whom you provided the address that their contact list may have been compromised … although my luck with that hasn’t been particularly good. Most companies deny any possibility that they might be the source of disclosure. Even when the address disclosed is Me+YourCompanyNameHere@… because that is something someone randomly generated. Sigh!

Too soon

Why is it always too soon to discuss how gun control (or precluding those with mental illnesses from possessing guns) might have averted a mass shooting, but it isn’t too soon to discuss how rounding up foreigners for mass deportation might have saved Mollie Tibbetts’s life?

Temporary Fix: ZoneMinder, PHP7.2, openHAB ZoneMinder Binding

I got ZoneMinder 1.31.45 (which includes the new CakePHP framework that doesn’t use what have become reserved words in PHP7) working with the openHAB ZoneMinder binding (which relies on data from the API at /zm/api/configs/view/ATTR_NAME.json). There are two options, ZM_PATH_ZMS and ZM_OPT_FRAME_SERVER, which now return bad parameter errors when attempting to retrieve the config using /view/. Looking through the database update scripts, it appears both of these parameters were removed at ZoneMinder 1.31.1.

ZM_PATH_ZMS was removed from the Config database and placed in a config file, /etc/zm/conf.d/01-system-paths.conf. There is a PR to “munge” this value into the API so /viewByName returns its value … but that doesn’t expose it through /view.

ZM_OPT_FRAME_SERVER appears to have been eliminated as a configuration option.

You cannot simply re-insert the config options into the database, as ZoneMinder itself loads the ZM_PATH_ZMS value from the config file and then proceeds to use it. When it attempts to load config parameters from the Config table and encounters a duplicate … it falls over. We were unable to view our video through the ZoneMinder server.

*But* editing /usr/share/zoneminder/www/includes/config.php (exact path may vary; list the files from your package install and find the config.php in www/includes) to include an if clause around the section that loads config parameters from the database, and only loading the parameter when the Name is not ZM_PATH_ZMS (the strcmp check in the code below), avoids this overlapping config value.

    $result = $dbConn->query( 'select * from Config order by Id asc' );
    if ( !$result )
        echo mysql_error();
    $monitors = array();
    while( $row = dbFetchNext( $result ) ) {
        if ( $defineConsts )
            // LJR 2018-08-18 I inserted this config parameter into DB to get OH2-ZM running, and need to ignore it in the ZM web code
            if( strcmp($row['Name'],'ZM_PATH_ZMS') != 0){
                define( $row['Name'], $row['Value'] );
            }
        $config[$row['Name']] = $row;
        if ( !($configCat = &$configCats[$row['Category']]) ) {
            $configCats[$row['Category']] = array();
            $configCat = &$configCats[$row['Category']];
        }
        $configCat[$row['Name']] = $row;
    }

Once the ZoneMinder web site happily ignores the presence of ZM_PATH_ZMS from the database config table, you can insert it and ZM_OPT_FRAME_SERVER (an option which appears to have been removed at ZoneMinder 1.31.1) back into the Config table. **Important** — change the actual value of ZM_PATH_ZMS to whatever is appropriate for your installation. In my ZoneMinder installation, /cgi-bin-zm is the cgi-bin directory, and /cgi-bin-zm/nph-zms is the ZMS binary.

From a MySQL command line:

    use zm; #Assuming your zoneminder database is actually named zm
    INSERT INTO `Config` VALUES (225,'ZM_PATH_ZMS','/cgi-bin-zm/nph-zms','string','/cgi-bin-zm/nph-zms','relative/path/to/somewhere','(?^:^((?:[^/].*)?)/?$)',' $1 ','Web path to zms streaming server',' The ZoneMinder streaming server is required to send streamed images to your browser. It will be installed into the cgi-bin path given at configuration time. This option determines what the web path to the server is rather than the local path on your machine. Ordinarily the streaming server runs in parser-header mode however if you experience problems with streaming you can change this to non-parsed-header (nph) mode by changing \'zms\' to \'nph-zms\'. ','hidden',0,NULL);
    INSERT INTO `Config` VALUES (226,'ZM_OPT_FRAME_SERVER','0','boolean','no','yes|no','(?^i:^([yn]))',' ($1 =~ /^y/) ? \"yes\" : \"no\" ','Should analysis farm out the writing of images to disk',' In some circumstances it is possible for a slow disk to take so long writing images to disk that it causes the analysis daemon to fall behind especially during high frame rate events. Setting this option to yes enables a frame server daemon (zmf) which will be sent the images from the analysis daemon and will do the actual writing of images itself freeing up the analysis daemon to get on with other things. Should this transmission fail or other permanent or transient error occur, this function will fall back to the analysis daemon. ','system',0,NULL);

Now restart ZoneMinder and the OH2 ZoneMinder binding. We’ve got monitors on the ZoneMinder web site, we are able to view the video stream, and OH2 picks up alarms from the ZoneMinder server.

If you re-run zmupdate.pl, it will remove these two records from the Config table. If you upgrade ZoneMinder, the change to the PHP file will be reverted.
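Since zmupdate.pl quietly removes the two records, a small check for their presence can save some head-scratching. This is only a sketch: `missing_config_names` is a made-up helper, it assumes a Python DB-API cursor that returns plain tuples, and it assumes the `Config` table has a `Name` column as in the INSERT statements above. MySQL drivers generally use `%s` as the placeholder; the demo uses an in-memory SQLite table as a stand-in, which uses `?`.

```python
import sqlite3

# The two options the openHAB binding expects to find (from this post).
REQUIRED = ("ZM_PATH_ZMS", "ZM_OPT_FRAME_SERVER")


def missing_config_names(cursor, names=REQUIRED, placeholder="%s"):
    """Return the names from `names` that have no row in the Config table."""
    marks = ", ".join([placeholder] * len(names))
    cursor.execute(
        f"SELECT Name FROM Config WHERE Name IN ({marks})", tuple(names)
    )
    present = {row[0] for row in cursor.fetchall()}
    return [name for name in names if name not in present]


if __name__ == "__main__":
    # Stand-in database: a real check would connect to the zm database.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE Config (Name TEXT, Value TEXT)")
    conn.execute(
        "INSERT INTO Config VALUES ('ZM_PATH_ZMS', '/cgi-bin-zm/nph-zms')"
    )
    print(missing_config_names(conn.cursor(), placeholder="?"))
```

An empty list means both records are still there; anything else means the INSERT statements need to be run again.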

DevOps Alternatives

While many people involved in the tech industry have a wide range of experience in technologies and are interested in expanding the breadth of that knowledge, they do not have the depth of knowledge that a dedicated Unix support person, a dedicated Oracle DBA, or a dedicated SAN engineer has. How much time can a development team reasonably dedicate to expanding the depth of their developers’ knowledge? Is a developer’s time well spent troubleshooting user issues? That’s something that makes the DevOps methodology a bit confusing to me. Most developers I know … while they may complain (loudly) about unresponsive operational support teams, about poor user support troubleshooting skills … they don’t want to spend half of their day diagnosing server issues and walking users through basic how-to’s.

The DevOps methodology reminds me a lot of GTE Wireline’s desktop and server support structure. Individual verticals had their own desktop support workforce. Groups with their own desktop support engineer didn’t share a desktop support person with 1,500 other employees in the region. Their tickets didn’t sit in a queue whilst the desktop tech sorted issues for three other groups. Their desktop support tech fixed problems for their group of 100 people. This meant problems were generally resolved quickly, and some money was saved in reduced downtime. *But* it wasn’t like downtime avoidance funded the tech’s salary. The business, and the department, decided to spend money for rapid problem resolution. Some groups didn’t want to spend money on dedicated desktop support, and they relied on corporate IT. Hell, the techs employed by individual business units relied on corporate IT for escalation support. I’ve seen server support managed the same way — the call center employed techs to manage the IVR and telephony system. The IVR is malfunctioning, you don’t put a ticket in a queue with the Unix support group and wait. You get the call center technologies support person to look at it NOW. The added advantage of working closely with a specific group is that you got to know how they worked, and could recommend and customize technologies based on their specific needs. An IM platform that allowed supervisors and resource management teams to initiate messages and call center reps to respond to messages. System usage reporting to ensure individuals were online and working during their prescribed times.

Thing is, the “proof” I see offered is how quickly new code can be deployed using DevOps methodologies. Comparing time-to-market for automated builds, testing, and deployment to manual processes in no way substantiates that DevOps is a highly efficient way to go about development. It shows me that automated processes that don’t involve waiting for someone to get around to doing it are quick, efficient, and generally reduce errors. Could similar efficiency be gained by having operation teams adopt automated processes?

Thing is, there was a down-side to having the major accounts technical support team in PA employ a desktop support technician. The major accounts technical support did not have broken computers forty hours a week. But they wanted someone available from … well, I think it was like 6AM to 10PM and they employed a handful of part time techs, but point remains they paid someone to sit around and wait for a computer to break. Their techs tended to be younger people going to school for IT. One sales pitch of the position, beyond on-the-job experience was that you could use your free time to study. Company saw it as an investment – we get a loyal employee with a B.S. in IT who moved into other orgs, college kid gets some resume-building experience, a chance to network with other support teams, and a LOT of study time that the local fast food joint didn’t offer. The access design engineering department hired a desktop tech who knew the Unix-based proprietary graphic workstations they used within the group. She also maintained their access design engineering servers. She was busier than the major accounts support techs, but even with server and desktop support she had technical development time.

Within the IT org, we had desktop support people who were nearly maxed out. By design — otherwise we were paying someone to sit around and do nothing. Pretty much the same methodology that went into staffing the call center — we might only expect two calls overnight, but we’d still employ two people to staff the phones between 10P and 6A *just* so we could say we had a 24×7 tech support line. During the day? We certainly wouldn’t hire two hundred people to handle one hundred’s worth of calls. Wouldn’t operations teams be quicker to turn around requests if they were massively overstaffed?

As a pure reporting change, where you’ve got developers and operations people who just report through the same structure to ensure priorities and goals align … reasonable. Not cost effective, but it’s a valid business decision. In a way, though, DevOps as a vogue ideology is the impetus behind financial decisions (just hire more people) and methodology changes (automate it!) that would likely have similar efficacy if implemented in silo’d verticals.

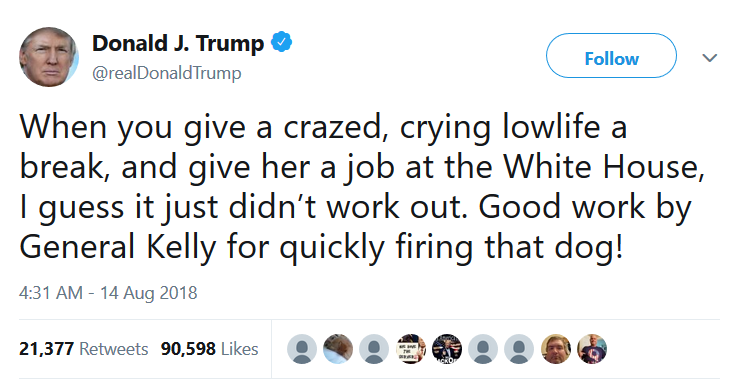

The Spoils System

How a company, political organization, or non-profit spends their money doesn’t bother me that much — it’s a factor I consider when donating to “the cause” or purchasing from a company. I can appreciate that one feels betrayed when, say, some of your University tuition is used to pay off women and pizza delivery people who have been attacked by the school’s hockey team, thus suppressing reporting and criminal charges (then bragging to prospective students about the ZERO on-campus crime rate). Or the quasi-celebrity fronted charity uses donations to settle legal disputes involving the quasi-celebrity. Or your political party offers a decent salary to someone they simultaneously claim is a terrible employee who needed to be fired. *But* those were all choices you made. And you’re free to make new choices next time around based on new information. I can even see how it would really suck to believe the Republican party has the right way of things, and you *want* to provide financial support, but you also have to accept that some percent of your contributions are going to salary for unqualified individuals who they want to keep quiet.

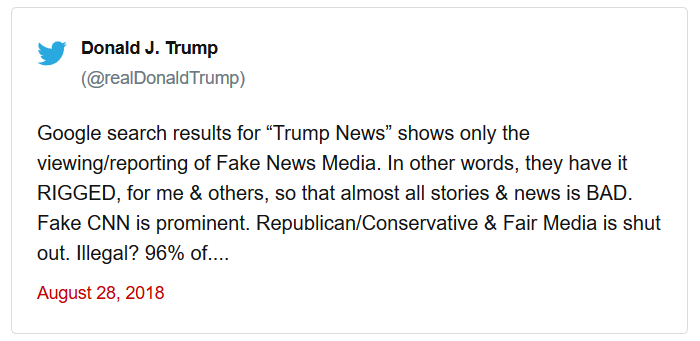

What bothers *me* is that Trump has essentially admitted he handed a $179,700 per year job to an utterly unqualified individual because of their personal relationship.

*I* didn’t choose the grifter-in-chief, but it’s still my tax money going to his friends (at that, to his “racist dude’s obligatory non-white, I cannot be a racist because Bob’s my friend” friend … although there are *whole binders full* of unqualified old white dudes grifting in Trump’s administration too).

Our tax money going to political supporters who are unqualified for the job? That’s why we’ve got the Pendleton Act and the subsequent civil service system — every politician could find somewhere for their friends and supporters to suck up taxpayer money for a few years. And the inverse: a politician could garner key supporters by offering them cushy government positions. And an unlucky politician could garner a supporter who considered himself key, anger the chap by not giving him one of those cushy jobs, and get shot. Maybe it’s time to eliminate the remaining “at the pleasure of the president” job appointments.

openHAB With Custom Built Serial Binding – fix to locking permission issue

When we updated our openHAB server to Fedora 28 and changed to a non-root user, the openhab user was unable to create lock files in /run/lock. As an interim fix, we just changed the permission on the lock folder to allow the openhab account to create files. As a more elegant solution, I’ve built the nrjavaserial JAR file from the source in NeuronRobotics’ repository.

The process to build and use a JAR built from this source follows. Before attempting to build the nrjavaserial jar from source, ensure you have gradle (which will install a LOT of additional packages), lockdev, lockdev-devel, some jdk, and some jdk-devel (I used java-1.8.0-openjdk-1.8.0.181-7.b13.fc28.x86_64 and java-1.8.0-openjdk-devel-1.8.0.181-7.b13.fc28.x86_64 because they were already installed for other projects).

# Set ossrhUsername and ossrhPassword values for the account used to build the project – username and password can be null

[lisa@server ~]# cat ~/.gradle/gradle.properties

ossrhUsername=

ossrhPassword=

# Grab the source

[lisa@server ~]# git clone https://github.com/NeuronRobotics/nrjavaserial.git

# Build the project

[lisa@server ~]# cd nrjavaserial

[lisa@server nrjavaserial]# make linux64 # assuming you’ve got 64-bit linux

# Voila, a jar file

[lisa@server nrjavaserial]# cd build/libs

[lisa@server libs]# ll

total 852

-rw-r--r-- 1 root root 611694 Aug 16 10:08 nrjavaserial-3.14.0.jar

-rw-r--r-- 1 root root 170546 Aug 16 10:08 nrjavaserial-3.14.0-javadoc.jar

-rw-r--r-- 1 root root 85833 Aug 16 10:08 nrjavaserial-3.14.0-sources.jar

Before installing the newly built nrjavaserial-3.14.0.jar into openHAB, ensure you have lockdev installed on your Fedora machine and add your openhab user account to the lock group.

# Verify the lockdev folder was created

[lisa@server ~]# ll /run/lock/

total 4

-rw-r--r-- 1 root root 22 Aug 10 15:35 asound.state.lock

drwx------ 2 root root 60 Aug 10 15:30 iscsi

drwxrwxr-x 2 root lock 140 Aug 16 12:19 lockdev

drwx------ 2 root root 40 Aug 10 15:30 lvm

drwxr-xr-x 2 root root 40 Aug 10 15:30 ppp

drwxr-xr-x 2 root root 40 Aug 10 15:30 subsys

# Add the openhab user to the lock group

[lisa@server ~]# usermod -a -G lock openhab

The openhab user account can now write to the /run/lock/lockdev folder. Install the new jar file into openHAB. When you restart openHAB, verify lock files are created as expected.

[lisa@server ~]# ll /run/lock/lockdev/

total 20

-rw-rw-r-- 5 openhab openhab 11 Aug 16 12:19 LCK...31525

-rw-rw-r-- 5 openhab openhab 11 Aug 16 12:19 LCK..ttyUSB-5

-rw-rw-r-- 5 openhab openhab 11 Aug 16 12:19 LCK..ttyUSB-55

-rw-rw-r-- 5 openhab openhab 11 Aug 16 12:19 LK.000.188.000

-rw-rw-r-- 5 openhab openhab 11 Aug 16 12:19 LK.000.188.001
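If the lock files do not show up, the group permission is the first thing worth checking. The following is a rough sketch in Python, not anything openHAB or nrjavaserial provides: the helper names are made up, it only looks at the directory's group bits (it ignores owner and other permissions, ACLs, and SELinux), and the default user and path are the ones used in this post.

```python
import os
import pwd
import stat


def group_can_create_files(mode, dir_gid, user_gids):
    """True when the user is in the directory's group and that group has
    both write and search (execute) permission on the directory."""
    needed = stat.S_IWGRP | stat.S_IXGRP
    return dir_gid in user_gids and (mode & needed) == needed


def user_can_lock(user="openhab", lock_dir="/run/lock/lockdev"):
    """Check the real directory and the real group list for `user`."""
    info = os.stat(lock_dir)
    account = pwd.getpwnam(user)
    gids = set(os.getgrouplist(user, account.pw_gid))
    return group_can_create_files(info.st_mode, info.st_gid, gids)


if __name__ == "__main__":
    # drwxrwxr-x with a hypothetical gid of 54 for the lock group
    print(group_can_create_files(0o40775, 54, {54, 1000}))
```

Keep in mind that a process only picks up new group membership when it starts, which is one more reason openHAB needs a restart after the usermod command.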

ZoneMinder Snapshot With openHAB Binding

When we upgraded to Fedora 28 on our server, ZoneMinder ceased working because some CakePHP function names could no longer be used. To resolve the issue, I ended up running a snapshot build of ZoneMinder that included a newer build of CakePHP: version 1.31.45 instead of the 1.30.4-7 in the repository.

All of our cameras showed up, and although the ZoneMinder folks seem to have a bug in the SQL query that builds the table of event counts on the main page (all of my monitors show blanks instead of event counts, and my Apache log is filled with errors like the one below)

[Wed Aug 15 12:08:37.152933 2018] [php7:notice] [pid 32496] [client 10.5.5.234:14705] ERR [SQL-ERR 'SQLSTATE[42000]: Syntax error or access violation: 1064 You have an error in your SQL syntax; check the manual that corresponds to your MariaDB server version for the right syntax to use near 'and E.MonitorId = '13' ),1,NULL)) as EventCount1, count(if(1 and ( and E.Monito' at line 1', statement was 'select count(if(1 and ( E.MonitorId = '13' ),1,NULL)) as EventCount0, count(if(1 and ( and E.MonitorId = '13' ),1,NULL)) as EventCount1, count(if(1 and ( and E.MonitorId = '13' ),1,NULL)) as EventCount2, count(if(1 and ( and E.MonitorId = '13' ),1,NULL)) as EventCount3, count(if(1 and ( and E.MonitorId = '13' ),1,NULL)) as EventCount4, count(if(1 and ( and E.MonitorId = '13' ),1,NULL)) as EventCount5 from Events as E where MonitorId = ?' params:13]

… it works.

Until Scott checked openHAB, where all of the items were offline. Apparently the openHAB ZoneMinder binding is using the cgi-bin stuff to get the value of ZM_PATH_ZMS, a config option which was removed from the database as part of the upgrade process.

    Upgrading database to version 1.31.1
    Loading config from DB
    No option 'ZM_DIR_EVENTS' found, removing.
    No option 'ZM_DIR_IMAGES' found, removing.
    No option 'ZM_DIR_SOUNDS' found, removing.
    No option 'ZM_FRAME_SOCKET_SIZE' found, removing.
    No option 'ZM_OPT_FRAME_SERVER' found, removing.
    No option 'ZM_PATH_ARP' found, removing.
    No option 'ZM_PATH_LOGS' found, removing.
    No option 'ZM_PATH_MAP' found, removing.
    No option 'ZM_PATH_SOCKS' found, removing.
    No option 'ZM_PATH_SWAP' found, removing.
    No option 'ZM_PATH_ZMS' found, removing.
    207 entries
    Saving config to DB
    207 entries
    Upgrading DB to 1.30.4 from 1.30.3

The calls from openHAB yield 404 errors in the access_log

10.0.0.5 - - [15/Aug/2018:09:38:04 -0400] "GET /zm/api/configs/view/ZM_PATH_ZMS.json HTTP/1.1" 404 1751 "-" "Jetty/9.3.21.v20170918"

Unfortunately they’ve changed the URL to get these values — it’s “munged” from the config file as the parameters are no longer stored to the Config table.

http://zoneminder.domain.ccTLD/zm/api/configs/view/ZM_PATH_ZMS.json

is now

http://zoneminder.domain.ccTLD/zm/api/configs/viewByName/ZM_PATH_ZMS.json

So … that’s a problem!
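For anyone writing their own client against the API, one way to cope with the changed path is to try the old URL and fall back to the new one. This is a sketch in Python, not what the openHAB binding does (the binding is Java and I have not changed it). The helper names are made up, the two URL patterns are the ones shown above, and the sketch assumes the API answers without authentication and returns JSON; it does not assume anything about the shape of that JSON.

```python
import json
import urllib.error
import urllib.request


def config_urls(base_url, name):
    """Return the old (/view/) and new (/viewByName/) URLs for an option."""
    base = base_url.rstrip("/")
    return (
        f"{base}/api/configs/view/{name}.json",
        f"{base}/api/configs/viewByName/{name}.json",
    )


def fetch_config(base_url, name, opener=urllib.request.urlopen):
    """Try /view/ first, then /viewByName/; return the decoded JSON body.

    `opener` is injectable so the logic can be exercised without a server.
    """
    last_error = None
    for url in config_urls(base_url, name):
        try:
            with opener(url) as response:
                return json.loads(response.read().decode("utf-8"))
        except urllib.error.HTTPError as err:
            last_error = err  # e.g. the 404 seen in the access_log
    raise last_error


if __name__ == "__main__":
    for url in config_urls("http://zoneminder.domain.ccTLD/zm", "ZM_PATH_ZMS"):
        print(url)
```

Running the file only prints the two candidate URLs; calling `fetch_config` needs a reachable ZoneMinder server.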