This information relates to the custom CA policy module implementation at https://github.com/ljr55555/CustomEkuPolicy

Values Used in this Implementation

The following GUID values are used within the code

LIBID CustomEkuPolicyModule 71e54225-3720-45d5-a181-1ce854b74c58

CLSID CustomEkuPolicyModule bea77360-4ed0-469c-a888-7b5cac3b8776

Project GUID 98d72278-b7ab-4f6b-817c-55dac0796675

This documentation was written while adding the custom module to a CA named UG-IssuingCA-02.

Code Overview

CustomEkuPolicy.dll is a custom AD CS policy module implemented as a native ATL COM DLL. It is designed to work as a wrapper around Microsoft’s default AD CS policy module rather than replacing that behavior entirely.

Purpose

The DLL adds a controlled enhancement to certificate issuance:

- preserve normal Microsoft CA policy behavior

- preserve compatibility with Venafi/MS CA workflows

- inject Server Authentication and Client Authentication EKUs only when appropriate

High-level behavior

When the CA processes a certificate request, the module does the following:

- Loads and delegates to the Microsoft default policy module:

  - CertificateAuthority_MicrosoftDefault.Policy

- Lets the default policy module evaluate the request normally:

  - issuance

  - pending/deny decisions

  - standard AD CS behavior

- If the default policy module decides to issue immediately:

  - inspects the request/certificate context

  - determines whether the certificate is eligible for EKU injection

- Injects the following EKUs only when all conditions are met:

  - Server Authentication: 1.3.6.1.5.5.7.3.1

  - Client Authentication: 1.3.6.1.5.5.7.3.2

Injection conditions

EKU injection occurs only if:

- the default Microsoft policy module returned an issue-now disposition (VR_INSTANT_OK)

- the certificate is not a CA certificate

- the certificate’s template/type name is allowed

- the certificate does not already contain an EKU extension

If any of those conditions is not met, the request is left unchanged.
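The four conditions can be read as a single predicate. The sketch below is illustrative only: RequestFacts and ShouldInjectEku are hypothetical names that do not appear in the project, and the real module gathers these facts through ICertServerPolicy rather than a plain struct.

```cpp
// Hypothetical summary of what is known about a request once the default
// policy module has run. None of these names come from the project.
struct RequestFacts {
    bool issuedNow;        // default policy returned "issue now"
    bool isCaCertificate;  // Basic Constraints marks the subject as a CA
    bool templateAllowed;  // template/type name is on the allow-list
    bool hasEkuExtension;  // an EKU extension (2.5.29.37) is already present
};

// True only when every injection condition holds.
bool ShouldInjectEku(const RequestFacts& f) {
    return f.issuedNow && !f.isCaCertificate && f.templateAllowed && !f.hasEkuExtension;
}
```

A request that fails any single check, for example one that already carries an EKU extension, makes the predicate false and is left untouched.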

Why the wrapper design is used

Earlier testing showed that completely replacing Microsoft’s default policy logic caused incompatibility with Venafi.

To avoid that, this module wraps the default policy module and preserves its normal behavior first, then applies the EKU enhancement afterward.

This design is what allows:

- successful issuance through Venafi

- retention of normal CA behavior

- selective EKU injection

Template/type filtering

The module supports a registry-driven allow-list of certificate template/type names.

Registry path:

HKLM\SYSTEM\CurrentControlSet\Services\CertSvc\Configuration\<CAName>\PolicyModules\CustomEkuPolicy.Module

Registry value:

AllowedTemplateNames

Type:

REG_MULTI_SZ

If the registry value is missing or empty, the module defaults to allowing only:

WebServer

This prevents the module from broadly injecting TLS EKUs into unrelated certificate types such as code-signing or other special-purpose leaf certificates.
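A REG_MULTI_SZ value is stored as a run of null-terminated strings followed by an empty string. As a rough sketch of the parsing and fallback described above, assuming the raw value has already been read into a wide-character buffer (ParseAllowedTemplateNames is a hypothetical name, and the actual module reads the value through the registry API):

```cpp
#include <cstddef>
#include <string>
#include <vector>

// Splits a REG_MULTI_SZ-style buffer (strings separated by L'\0', list ended
// by an empty string) into individual names. Falls back to "WebServer" when
// nothing usable is found, mirroring the default described above.
std::vector<std::wstring> ParseAllowedTemplateNames(const wchar_t* data, std::size_t cch) {
    std::vector<std::wstring> names;
    std::size_t i = 0;
    while (data != nullptr && i < cch) {
        const std::size_t start = i;
        while (i < cch && data[i] != L'\0') ++i;  // find the end of this string
        if (i == start) break;                    // an empty string ends the list
        names.emplace_back(data + start, i - start);
        ++i;                                      // skip the terminator
    }
    if (names.empty()) names.push_back(L"WebServer");
    return names;
}
```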

Important implementation details

- Implements ICertPolicy2

- Built as an in-process COM DLL loaded by certsrv.exe

- Uses ATL for COM plumbing

- Uses CryptoAPI to ASN.1-encode the EKU extension

- Supports binary extension values returned by AD CS as either:

  - VT_BSTR

  - VT_ARRAY | VT_UI1

Failure behavior

If EKU injection fails:

- the module logs the failure

- the default Microsoft issuance decision is preserved

This means the module is effectively fail-open with respect to EKU injection, to avoid breaking certificate issuance workflows.

Result

In normal operation, certificates of the allowed type that do not already contain EKUs are issued with:

- Server Authentication

- Client Authentication

Other certificate types are left unchanged.

Function Reference Table

| Function | Location | Called from | Calls | Purpose |
|---|---|---|---|---|
| DllMain | dllmain.cpp | Windows loader | _AtlModule.DllMain | Standard DLL entry point for process/thread attach and detach. |
| DllCanUnloadNow | dllmain.cpp | COM runtime | _AtlModule.DllCanUnloadNow | Standard COM export used to determine whether the DLL can be unloaded. |
| DllGetClassObject | dllmain.cpp | COM runtime | _AtlModule.DllGetClassObject | Standard COM export that returns the class factory for CCustomEkuPolicyModule. |
| DllRegisterServer | dllmain.cpp | COM registration tools / COM runtime if invoked | _AtlModule.DllRegisterServer | Standard COM registration export. Manual registry registration is used operationally instead. |
| DllUnregisterServer | dllmain.cpp | COM registration tools / COM runtime if invoked | _AtlModule.DllUnregisterServer | Standard COM unregistration export. |
| GetTypeInfoCount | CustomEkuPolicyModule.cpp | COM/IDispatch clients if they query dispatch metadata | none | Minimal IDispatch stub implementation. Returns no type information. |
| GetTypeInfo | CustomEkuPolicyModule.cpp | COM/IDispatch clients | none | Minimal IDispatch stub implementation. Not implemented for this module. |
| GetIDsOfNames | CustomEkuPolicyModule.cpp | COM/IDispatch clients | none | Minimal IDispatch stub implementation. Not used by AD CS in this design. |
| Invoke | CustomEkuPolicyModule.cpp | COM/IDispatch clients | none | Minimal IDispatch stub implementation. Not used by AD CS in this design. |
| Initialize | CustomEkuPolicyModule.cpp | AD CS when the policy module is initialized | EnsureDefaultPolicyLoaded, LoadConfiguration, m_spDefaultPolicy->Initialize | Stores the CA config string, loads registry-based configuration, loads the Microsoft default policy module, and delegates initialization to the default module. |
| VerifyRequest | CustomEkuPolicyModule.cpp | AD CS for each certificate request | EnsureDefaultPolicyLoaded, m_spDefaultPolicy->VerifyRequest, TryInjectDefaultEku, LogHr | Main policy processing function. Delegates request handling to the Microsoft default policy module and, if the request is immediately issued, attempts EKU injection. |
| GetDescription | CustomEkuPolicyModule.cpp | AD CS / administrative interfaces | none | Returns a human-readable description of the policy module. |
| ShutDown | CustomEkuPolicyModule.cpp | AD CS during service shutdown / module unload | m_spDefaultPolicy->ShutDown | Delegates shutdown to the default policy module. |
| GetManageModule | CustomEkuPolicyModule.cpp | AD CS / administrative interfaces | m_spDefaultPolicy2->GetManageModule | Pass-through to the default policy module’s ICertPolicy2::GetManageModule() when available. |
| EnsureDefaultPolicyLoaded | CustomEkuPolicyModule.cpp | Initialize, VerifyRequest | CLSIDFromProgID, CoCreateInstance, QueryInterface | Loads the Microsoft default policy module (CertificateAuthority_MicrosoftDefault.Policy) and caches interface pointers. |
| LoadConfiguration | CustomEkuPolicyModule.cpp | Initialize | LoadAllowedTemplateNamesFromRegistry | Loads custom registry-driven configuration for the wrapper module. |
| LoadAllowedTemplateNamesFromRegistry | CustomEkuPolicyModule.cpp | LoadConfiguration | RegOpenKeyExW, RegQueryValueExW, RegCloseKey, LogInfo | Reads AllowedTemplateNames from the CA policy module registry key and populates the internal allow-list. Falls back to WebServer if not configured. |
| TryInjectDefaultEku | CustomEkuPolicyModule.cpp | VerifyRequest | CoCreateInstance, SetContext, IsCaRequest, GetTemplateName, IsTemplateAllowed, IsExtensionPresent, BuildDefaultEkuEncoded, SetExtensionBytes, LogInfo, LogHr | Performs the conditional EKU injection logic after default policy approval. |
| GetExtensionBytes | CustomEkuPolicyModule.cpp | IsExtensionPresent, IsCaRequest, GetTemplateName | ICertServerPolicy::GetCertificateExtension, ICertServerPolicy::GetCertificateExtensionFlags, VariantClear | Reads a certificate/request extension value from ICertServerPolicy as binary. |
| SetExtensionBytes | CustomEkuPolicyModule.cpp | TryInjectDefaultEku | SysAllocStringByteLen, ICertServerPolicy::SetCertificateExtension, VariantClear | Writes a binary certificate/request extension value back through ICertServerPolicy. Used to inject EKU. |
| IsExtensionPresent | CustomEkuPolicyModule.cpp | TryInjectDefaultEku | GetExtensionBytes, VariantClear | Checks whether a specific extension OID already exists in the current request/certificate context. |
| BuildDefaultEkuEncoded | CustomEkuPolicyModule.cpp | TryInjectDefaultEku | CryptEncodeObjectEx | Builds and ASN.1-encodes the default EKU extension containing Server Auth and Client Auth OIDs. |
| IsCaRequest | CustomEkuPolicyModule.cpp | TryInjectDefaultEku | GetExtensionBytes, VariantBinaryToBytes, CryptDecodeObjectEx, VariantClear, LocalFree | Reads and decodes Basic Constraints to determine whether the request is for a CA certificate. |
| VariantBinaryToBytes | CustomEkuPolicyModule.cpp | IsCaRequest, GetTemplateName | SysStringByteLen, SafeArrayGetLBound, SafeArrayGetUBound, SafeArrayAccessData, SafeArrayUnaccessData | Normalizes binary values returned from AD CS (VT_BSTR or `VT_ARRAY \| VT_UI1`) into a byte vector. |
| GetTemplateName | CustomEkuPolicyModule.cpp | TryInjectDefaultEku | GetExtensionBytes, VariantBinaryToBytes, CryptDecodeObjectEx, VariantClear, LocalFree | Reads and decodes the certificate template/type name extension (1.3.6.1.4.1.311.20.2). |
| IsTemplateAllowed | CustomEkuPolicyModule.cpp | TryInjectDefaultEku | EqualsIgnoreCase | Checks whether the decoded template/type name matches one of the allowed names from configuration. |
| EqualsIgnoreCase | CustomEkuPolicyModule.cpp | IsTemplateAllowed | towlower | Case-insensitive string comparison helper for template/type matching. |
| LogEventWord | CustomEkuPolicyModule.cpp | LogInfo, LogHr | RegisterEventSourceW, ReportEventW, DeregisterEventSource | Low-level Windows Event Log writer for the CustomEkuPolicy source. |
| LogInfo | CustomEkuPolicyModule.cpp | Multiple internal functions | LogEventWord | Convenience wrapper for informational event logging. |
| LogHr | CustomEkuPolicyModule.cpp | Multiple internal functions | StringCchPrintfW, LogEventWord | Convenience wrapper for logging HRESULT failures to the Windows Event Log. |

Code Flow

This section describes how the main code paths in CustomEkuPolicy.dll work.

1. DLL load and COM registration

The DLL is an ATL COM in-process server.

Key file:

- dllmain.cpp

Key responsibilities:

- exports standard COM entry points:

  - DllMain

  - DllCanUnloadNow

  - DllGetClassObject

  - DllRegisterServer

  - DllUnregisterServer

- exposes the COM class:

  - CCustomEkuPolicyModule

The class is registered in the ATL object map with:

OBJECT_ENTRY_AUTO(CLSID_CustomEkuPolicyModule, CCustomEkuPolicyModule)

This is what allows AD CS to instantiate the policy module through COM.

2. AD CS loads the policy module

When Certificate Services starts, AD CS reads the configured active policy module from the registry and creates the COM object.

Main class:

- CCustomEkuPolicyModule

Implemented interface:

- ICertPolicy2

This class is the entry point for all CA policy decisions handled by the DLL.

3. Initialize()

Function:

- CCustomEkuPolicyModule::Initialize(BSTR strConfig)

Purpose:

- store the CA configuration string

- load configuration from registry

- load the Microsoft default policy module

- delegate initialization to the default module

Flow:

- Save strConfig

- Call EnsureDefaultPolicyLoaded()

- Call LoadConfiguration()

- Call the default module’s Initialize()

This ensures the wrapper is fully initialized before the CA begins handling requests.

4. EnsureDefaultPolicyLoaded()

Function:

- EnsureDefaultPolicyLoaded()

Purpose:

- create an instance of the Microsoft default policy module

How it works:

- Resolve the ProgID:

CertificateAuthority_MicrosoftDefault.Policy

- Convert it to a CLSID using CLSIDFromProgID

- Create the COM object with CoCreateInstance

- Store the resulting interface pointers:

  - ICertPolicy

  - optionally ICertPolicy2

Why this matters:

- This is the core wrapper behavior

- Instead of replacing Microsoft policy behavior, the module delegates to it first

5. LoadConfiguration()

Function:

- LoadConfiguration()

Purpose:

- load custom module behavior from registry

Currently this loads:

- AllowedTemplateNames

Supporting function:

- LoadAllowedTemplateNamesFromRegistry()

Flow:

- Read the CA name from strConfig

- Build registry path:

HKLM\SYSTEM\CurrentControlSet\Services\CertSvc\Configuration\<CAName>\PolicyModules\CustomEkuPolicy.Module

- Read AllowedTemplateNames as REG_MULTI_SZ

- Store values in:

  - m_allowedTemplateNames

Fallback behavior:

- if the value is missing or unusable, default to:

  - WebServer

This controls which certificate types are eligible for EKU injection.
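To make the path construction concrete, here is a minimal sketch. It assumes strConfig arrives in the common `<Machine>\<CAName>` form, which the source does not confirm; CaNameFromConfig and PolicyModuleKeyPath are hypothetical helper names.

```cpp
#include <cstddef>
#include <string>

// Assumption: the config string looks like "<Machine>\<CAName>". If there is
// no backslash, the whole string is treated as the CA name.
std::wstring CaNameFromConfig(const std::wstring& config) {
    const std::size_t pos = config.rfind(L'\\');
    return pos == std::wstring::npos ? config : config.substr(pos + 1);
}

// Builds the path, relative to HKLM, of the module's configuration key.
std::wstring PolicyModuleKeyPath(const std::wstring& caName) {
    return L"SYSTEM\\CurrentControlSet\\Services\\CertSvc\\Configuration\\" +
           caName + L"\\PolicyModules\\CustomEkuPolicy.Module";
}
```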

6. VerifyRequest()

Function:

- CCustomEkuPolicyModule::VerifyRequest(…)

This is the most important function in the module.

Purpose:

- let Microsoft default policy evaluate the request

- preserve its result

- attempt EKU injection only after successful default issuance

Flow:

- Call EnsureDefaultPolicyLoaded()

- Call the default module’s:

m_spDefaultPolicy->VerifyRequest(…)

- If the default module fails:

  - return the failure unchanged

- If the default module returns a disposition other than VR_INSTANT_OK:

  - exit without modification

- If the request is being issued immediately:

  - call TryInjectDefaultEku(Context)

- If EKU injection fails:

  - log the failure

  - preserve the default issue decision

- Return success
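The control flow above can be sketched without any COM types. In this sketch the callbacks stand in for m_spDefaultPolicy->VerifyRequest, TryInjectDefaultEku, and LogHr. PolicyResult, Disposition, and VerifyWithWrapper are hypothetical names, and the numeric disposition values are assumed to mirror certpol.h and should be checked against the header.

```cpp
#include <functional>

// Local stand-ins for the disposition constants (assumed values:
// VR_PENDING = 0, VR_INSTANT_OK = 1, VR_INSTANT_BAD = 2).
enum Disposition : long { kPending = 0, kInstantOk = 1, kInstantBad = 2 };

// Hypothetical result of the delegated call; ok == false models a failed HRESULT.
struct PolicyResult {
    bool ok;
    Disposition disposition;
};

PolicyResult VerifyWithWrapper(
    const std::function<PolicyResult()>& defaultPolicy,  // default module's VerifyRequest
    const std::function<bool()>& tryInjectEku,           // returns false when injection fails
    const std::function<void()>& logFailure) {           // records the injection failure
    const PolicyResult result = defaultPolicy();
    if (!result.ok) return result;                        // failure is returned unchanged
    if (result.disposition != kInstantOk) return result;  // pending/denied: no modification
    if (!tryInjectEku()) logFailure();                    // fail-open: log, keep the decision
    return result;
}
```

The wrapper never changes the disposition it received, which is what keeps the default module's decision authoritative.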

7. TryInjectDefaultEku()

Function:

- TryInjectDefaultEku(LONG Context)

Purpose:

- inspect the certificate request/certificate context

- determine whether EKU injection should happen

- add the EKU extension if appropriate

Flow:

- Create ICertServerPolicy

- Call SetContext(Context)

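- Determine whether the request is a CA certificate:

  - IsCaRequest(…)

- If CA cert:

  - leave the request unchanged

- Read certificate type/template name:

  - GetTemplateName(…)

- Check whether template/type is allowed:

  - IsTemplateAllowed(…)

- If not allowed:

  - leave the request unchanged

- Check whether EKU already exists:

  - IsExtensionPresent("2.5.29.37")

- If EKU already exists:

  - leave the request unchanged

- Build the encoded EKU extension:

  - BuildDefaultEkuEncoded(…)

- Write the extension:

  - SetExtensionBytes("2.5.29.37", …)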

Only if all of those conditions succeed does the module inject the EKU.

8. IsCaRequest()

Function:

- IsCaRequest(ICertServerPolicy* pServer, bool& isCa)

Purpose:

- prevent EKU injection on CA certificates

How it works:

- Read the Basic Constraints extension

- Decode it with CryptoAPI

- Check fCA

- Set isCa = true if CA certificate

This protects subordinate CA and CA certificate requests from receiving inappropriate TLS EKUs.
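For illustration, the decoding step can be approximated by hand. The module itself relies on CryptoAPI; the sketch below is a simplified stand-in that understands only short-form DER lengths, and DecodeIsCa is a hypothetical name.

```cpp
#include <cstddef>
#include <cstdint>
#include <vector>

// Minimal DER reader for
//   BasicConstraints ::= SEQUENCE { cA BOOLEAN DEFAULT FALSE, pathLenConstraint INTEGER OPTIONAL }
// Returns false on input it cannot parse; isCa is only meaningful on success.
bool DecodeIsCa(const std::vector<std::uint8_t>& der, bool& isCa) {
    isCa = false;
    if (der.size() < 2 || der[0] != 0x30) return false;      // must be a SEQUENCE
    const std::size_t len = der[1];
    if (len >= 0x80 || der.size() != 2 + len) return false;  // long-form lengths not handled
    if (len == 0) return true;                               // cA omitted, so it defaults to FALSE
    if (der[2] == 0x01) {                                    // BOOLEAN tag: the cA field
        if (len < 3 || der[3] != 0x01) return false;         // a BOOLEAN has exactly one content byte
        isCa = der[4] != 0x00;
    }
    return true;                                             // otherwise only pathLenConstraint is present
}
```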

9. GetTemplateName()

Function:

- GetTemplateName(ICertServerPolicy* pServer, std::wstring& templateName)

Purpose:

- identify the certificate type/template name

How it works:

- Read extension:

  - 1.3.6.1.4.1.311.20.2

- Convert returned binary value into bytes

- Decode the string value

- Return the template/type name

Example expected value:

- WebServer

This is the main filter used to decide whether EKU injection is allowed.
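As a sketch of the decode step, assuming the extension value is a DER BMPString (UTF-16 big-endian), which is how this legacy template name extension is commonly encoded. The module itself decodes with CryptoAPI, and DecodeBmpString is a hypothetical helper that handles short-form lengths only.

```cpp
#include <cstddef>
#include <cstdint>
#include <string>
#include <vector>

// Decodes a DER BMPString (tag 0x1E) into a wide string.
// Returns false if the tag, length, or byte count is not what is expected.
bool DecodeBmpString(const std::vector<std::uint8_t>& der, std::wstring& out) {
    out.clear();
    if (der.size() < 2 || der[0] != 0x1E) return false;
    const std::size_t len = der[1];
    if (len >= 0x80 || len % 2 != 0 || der.size() != 2 + len) return false;
    for (std::size_t i = 2; i < der.size(); i += 2) {
        // Each character is two bytes, most significant byte first.
        out.push_back(static_cast<wchar_t>((der[i] << 8) | der[i + 1]));
    }
    return true;
}
```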

10. IsTemplateAllowed()

Function:

- IsTemplateAllowed(const std::wstring& templateName) const

Purpose:

- compare the certificate type name against the configured allow-list

How it works:

- case-insensitive comparison against m_allowedTemplateNames

If the template/type is not in the allow-list, the request is left unchanged.
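A minimal sketch of the comparison, written as free functions for readability. In the module, IsTemplateAllowed is a member that reads m_allowedTemplateNames; passing the list as a parameter here is an assumption made to keep the example self-contained.

```cpp
#include <cstddef>
#include <cwctype>
#include <string>
#include <vector>

// Case-insensitive equality using towlower on each character.
bool EqualsIgnoreCase(const std::wstring& a, const std::wstring& b) {
    if (a.size() != b.size()) return false;
    for (std::size_t i = 0; i < a.size(); ++i) {
        if (std::towlower(static_cast<std::wint_t>(a[i])) !=
            std::towlower(static_cast<std::wint_t>(b[i]))) {
            return false;
        }
    }
    return true;
}

// True when the name matches any allow-list entry, ignoring case.
bool IsTemplateAllowed(const std::vector<std::wstring>& allowed, const std::wstring& name) {
    for (const std::wstring& candidate : allowed) {
        if (EqualsIgnoreCase(candidate, name)) return true;
    }
    return false;
}
```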

11. IsExtensionPresent()

Function:

- IsExtensionPresent(ICertServerPolicy* pServer, LPCWSTR wszOid, bool& present)

Purpose:

- determine whether a specific extension already exists

Used for:

- checking whether 2.5.29.37 Enhanced Key Usage is already present

If it is already present, the module does not modify it.

This preserves explicitly requested EKUs.

12. BuildDefaultEkuEncoded()

Function:

- BuildDefaultEkuEncoded(BYTE** ppbEncoded, DWORD* pcbEncoded)

Purpose:

- ASN.1-encode the default EKU list

EKUs encoded:

- Server Authentication

- Client Authentication

How it works:

- Build CERT_ENHKEY_USAGE

- Encode it using:

  - CryptEncodeObjectEx

- Return the encoded blob

This produces the value written into extension 2.5.29.37.
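To show what the encoded value looks like, the sketch below builds the same structure by hand instead of calling CryptoAPI. EncodeOid and BuildDefaultEku are hypothetical names, and the encoder handles only short-form lengths and OIDs with at least two arcs, which is enough for these two values.

```cpp
#include <cstddef>
#include <cstdint>
#include <sstream>
#include <string>
#include <vector>

// DER-encodes one dotted OID as an OBJECT IDENTIFIER (tag 0x06).
std::vector<std::uint8_t> EncodeOid(const std::string& dotted) {
    std::vector<unsigned long> arcs;
    std::stringstream ss(dotted);
    std::string part;
    while (std::getline(ss, part, '.')) arcs.push_back(std::stoul(part));

    std::vector<std::uint8_t> body;
    body.push_back(static_cast<std::uint8_t>(arcs[0] * 40 + arcs[1]));  // first two arcs share one byte
    for (std::size_t i = 2; i < arcs.size(); ++i) {
        std::vector<std::uint8_t> chunk;  // base-128 digits, most significant first
        unsigned long v = arcs[i];
        chunk.push_back(static_cast<std::uint8_t>(v & 0x7F));
        while (v >>= 7) {
            chunk.insert(chunk.begin(), static_cast<std::uint8_t>((v & 0x7F) | 0x80));
        }
        body.insert(body.end(), chunk.begin(), chunk.end());
    }
    std::vector<std::uint8_t> out = {0x06, static_cast<std::uint8_t>(body.size())};
    out.insert(out.end(), body.begin(), body.end());
    return out;
}

// SEQUENCE OF OBJECT IDENTIFIER holding Server Authentication and Client Authentication.
std::vector<std::uint8_t> BuildDefaultEku() {
    std::vector<std::uint8_t> body = EncodeOid("1.3.6.1.5.5.7.3.1");
    const std::vector<std::uint8_t> client = EncodeOid("1.3.6.1.5.5.7.3.2");
    body.insert(body.end(), client.begin(), client.end());
    std::vector<std::uint8_t> out = {0x30, static_cast<std::uint8_t>(body.size())};
    out.insert(out.end(), body.begin(), body.end());
    return out;
}
```

For the two OIDs above this yields the 22-byte value 30 14 06 08 2B 06 01 05 05 07 03 01 06 08 2B 06 01 05 05 07 03 02.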

13. SetExtensionBytes()

Function:

- SetExtensionBytes(ICertServerPolicy* pServer, LPCWSTR wszOid, const BYTE* pbData, DWORD cbData, LONG extFlags)

Purpose:

- write a binary certificate extension back into the current request/certificate context

How it works:

- Convert the encoded bytes into a binary BSTR

- Wrap in a VARIANT

- Call:

  - ICertServerPolicy::SetCertificateExtension(…)

This is the actual point where EKU injection occurs.

14. VariantBinaryToBytes()

Function:

- VariantBinaryToBytes(const VARIANT& var, std::vector<BYTE>& bytes)

Purpose:

- normalize AD CS binary extension values into a byte vector

Why it exists:

- AD CS may return binary property values as either:

  - VT_BSTR

  - VT_ARRAY | VT_UI1

This helper makes downstream decoding logic reliable and avoids earlier failures caused by assuming only one format.

15. Logging

Functions:

- LogEventWord()

- LogInfo()

- LogHr()

Purpose:

- write operational/debug events to the Windows Application log

Used for:

- initialization failures

- EKU injection failures

- important decision points

This was added mainly to make runtime behavior observable and to help troubleshoot CA/AD CS integration.
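As a rough illustration of what an HRESULT log line might look like. The message format and the name FormatHrMessage are assumptions; the module itself formats wide strings with StringCchPrintfW before writing to the event log, while this sketch uses portable narrow strings.

```cpp
#include <cstdint>
#include <cstdio>
#include <string>

// Formats "<context> failed, hr=0xXXXXXXXX" for a failed call.
std::string FormatHrMessage(const std::string& context, std::uint32_t hr) {
    char hex[16];
    std::snprintf(hex, sizeof(hex), "0x%08X", static_cast<unsigned int>(hr));
    return context + " failed, hr=" + hex;
}
```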

16. Failure model

The wrapper preserves Microsoft default behavior wherever possible.

Important principle:

- default policy decision comes first

- EKU injection is best effort

- if EKU injection fails, issuance is not blocked unless the default policy blocked it

This makes the module effectively fail-open for EKU injection, while still preserving standard CA behavior.

Summary of end-to-end request flow

In simplified form, the code path is:

- AD CS loads CustomEkuPolicy.dll

- Initialize() loads the Microsoft default policy module and configuration

- On each request, VerifyRequest() calls the Microsoft default policy module

- If Microsoft policy chooses immediate issuance:

  - inspect request

  - check CA/not-CA

  - check template/type allow-list

  - check existing EKU

  - inject Server Auth + Client Auth EKU if eligible

- Return the Microsoft default issuance result

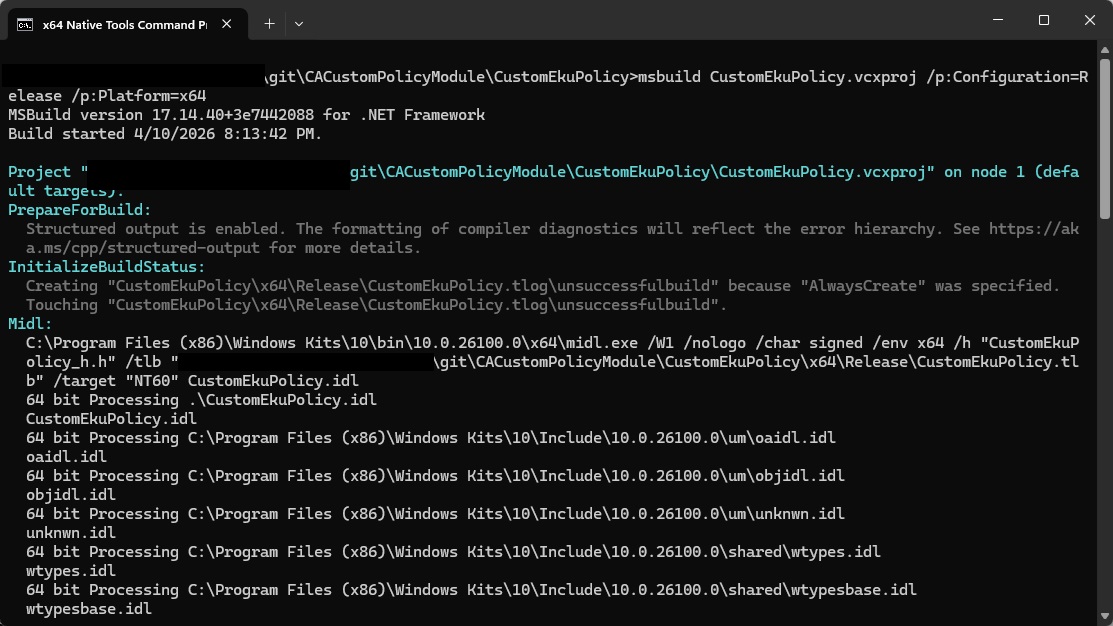

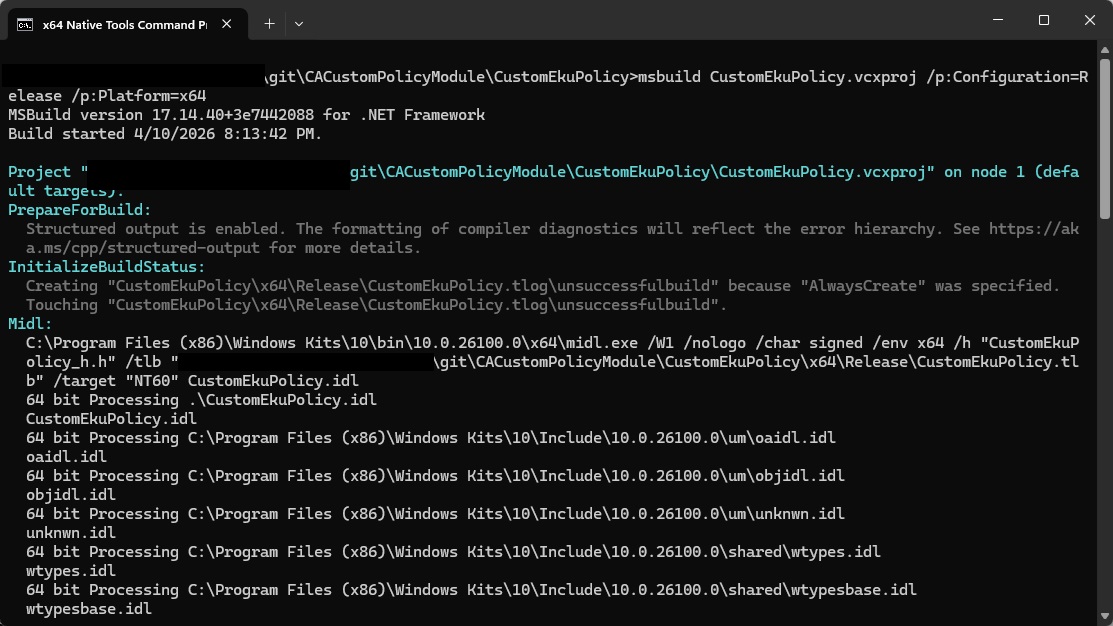

Build

Install Build Tools for Visual Studio 2022 with the Desktop development with C++ workload, including the MSVC v143 toolset, Windows SDK, and ATL support.

Build the project from the x64 Native Tools Command Prompt for VS 2022:

msbuild CustomEkuPolicy.vcxproj /p:Configuration=Release /p:Platform=x64

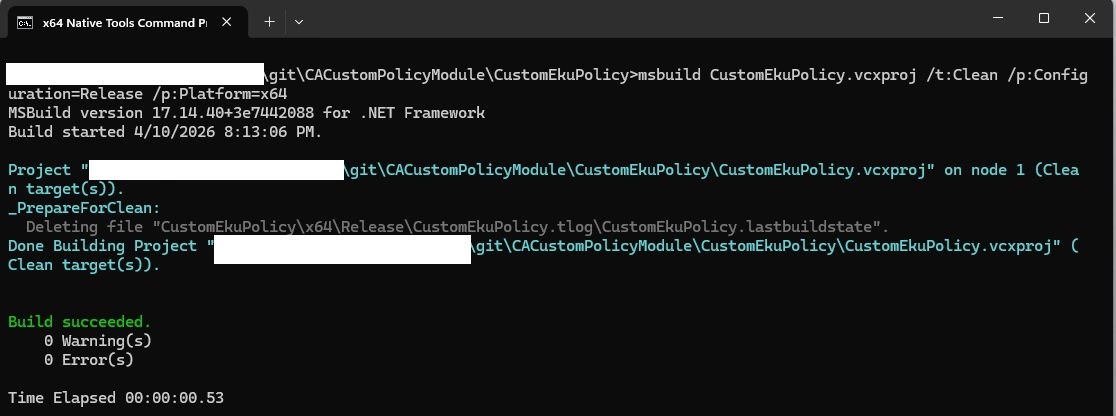

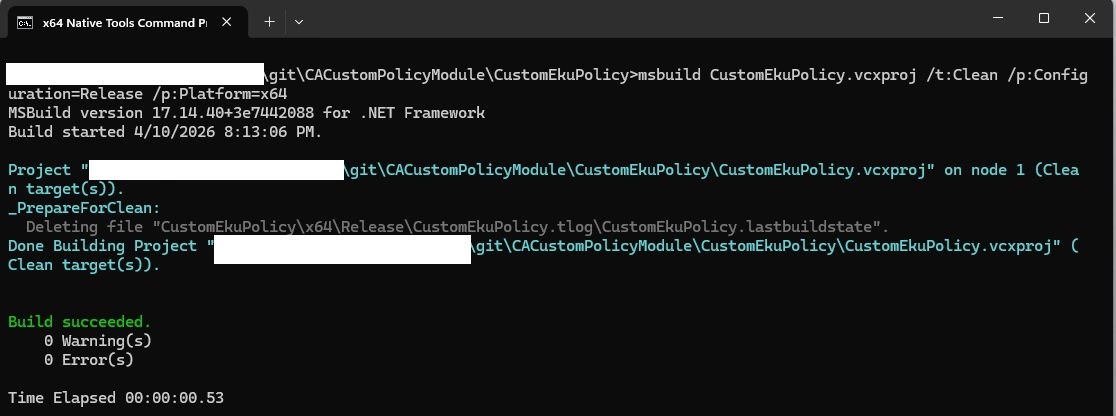

The equivalent of make clean is

msbuild CustomEkuPolicy.vcxproj /t:Clean /p:Configuration=Release /p:Platform=x64

Installation

Copy CustomEkuPolicy.dll to a location on the CA server. I am using C:\Program Files\EKUPolicy\CustomEkuPolicy

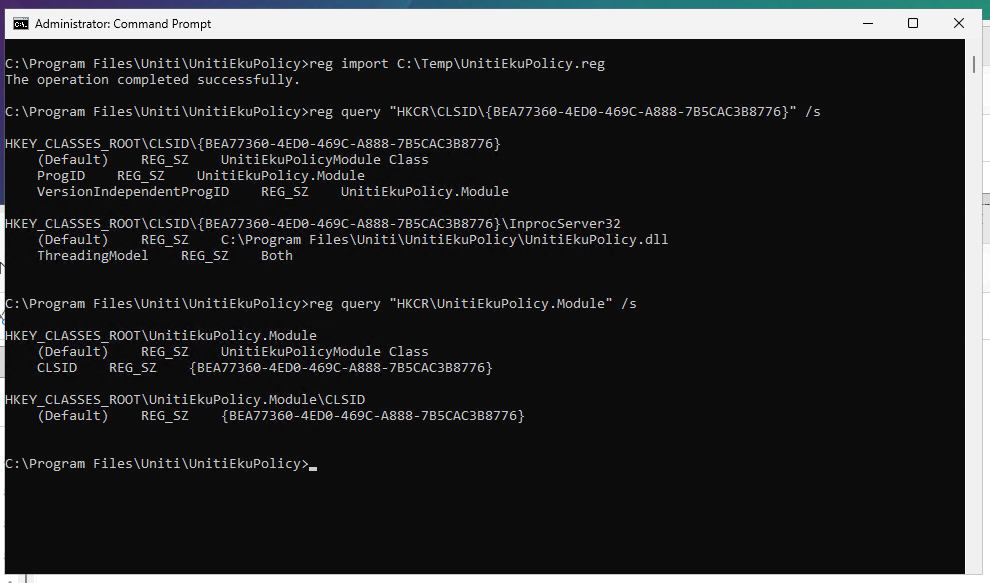

Create a REG file to import the COM registration

Windows Registry Editor Version 5.00

[HKEY_CLASSES_ROOT\CLSID\{BEA77360-4ED0-469C-A888-7B5CAC3B8776}]

@="CustomEkuPolicyModule Class"

"ProgID"="CustomEkuPolicy.Module"

"VersionIndependentProgID"="CustomEkuPolicy.Module"

[HKEY_CLASSES_ROOT\CLSID\{BEA77360-4ED0-469C-A888-7B5CAC3B8776}\InprocServer32]

@="C:\\Program Files\\EKUPolicy\\CustomEkuPolicy\\CustomEkuPolicy.dll"

"ThreadingModel"="Both"

[HKEY_CLASSES_ROOT\CustomEkuPolicy.Module]

@="CustomEkuPolicyModule Class"

"CLSID"="{BEA77360-4ED0-469C-A888-7B5CAC3B8776}"

[HKEY_CLASSES_ROOT\CustomEkuPolicy.Module\CLSID]

@="{BEA77360-4ED0-469C-A888-7B5CAC3B8776}"

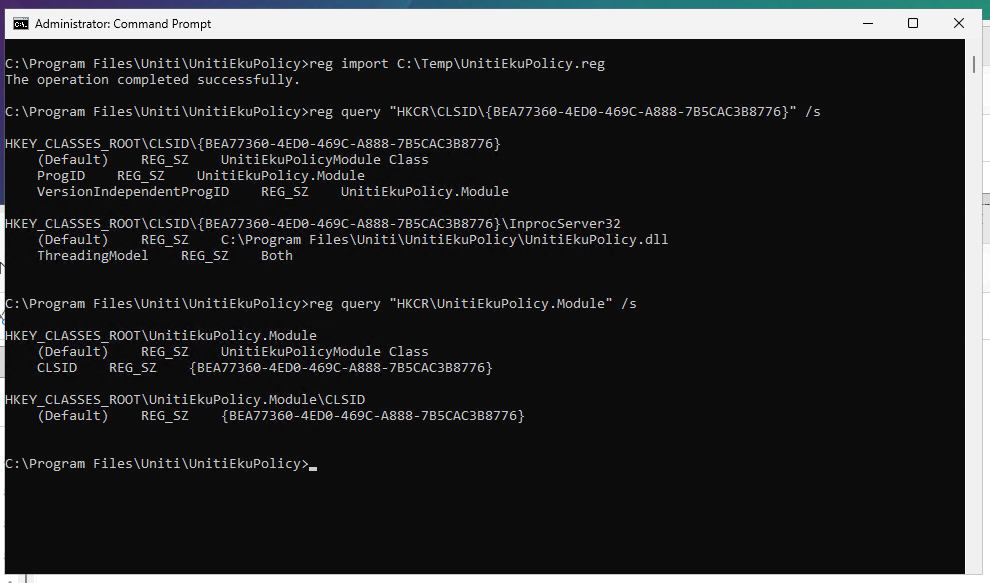

In an elevated command prompt, import the reg file and verify the values are present

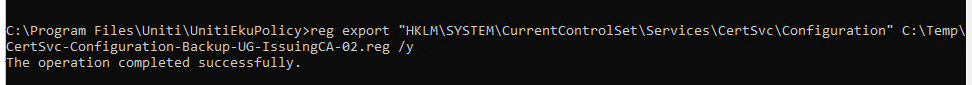

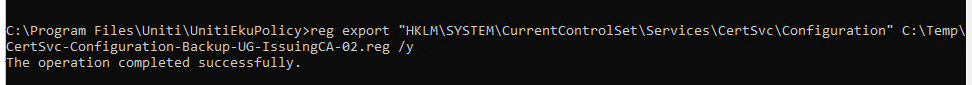

Back up the current registry settings

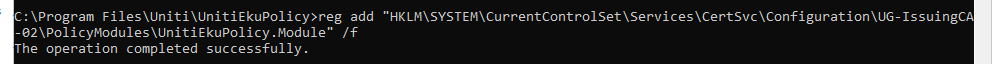

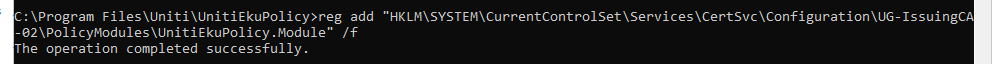

Add the key for the custom module, replacing <CAName> with the name of your CA:

reg add "HKLM\SYSTEM\CurrentControlSet\Services\CertSvc\Configuration\<CAName>\PolicyModules\CustomEkuPolicy.Module" /f

Add WebServer to the registry key for the custom policy module unless you want to rely on the DLL defaults:

reg add "HKLM\SYSTEM\CurrentControlSet\Services\CertSvc\Configuration\<CAName>\PolicyModules\CustomEkuPolicy.Module" /v AllowedTemplateNames /t REG_MULTI_SZ /d "WebServer\0" /f

And activate the custom module:

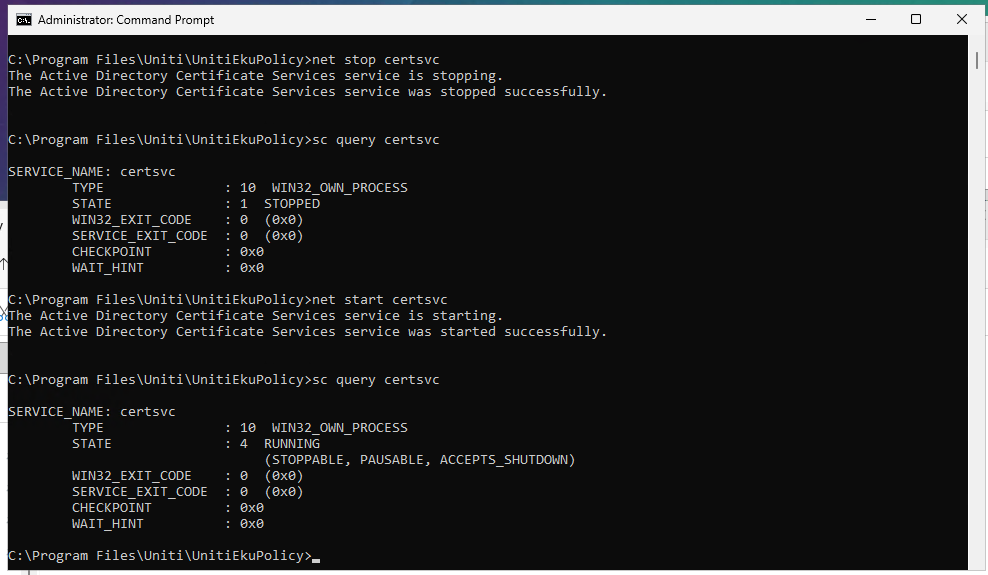

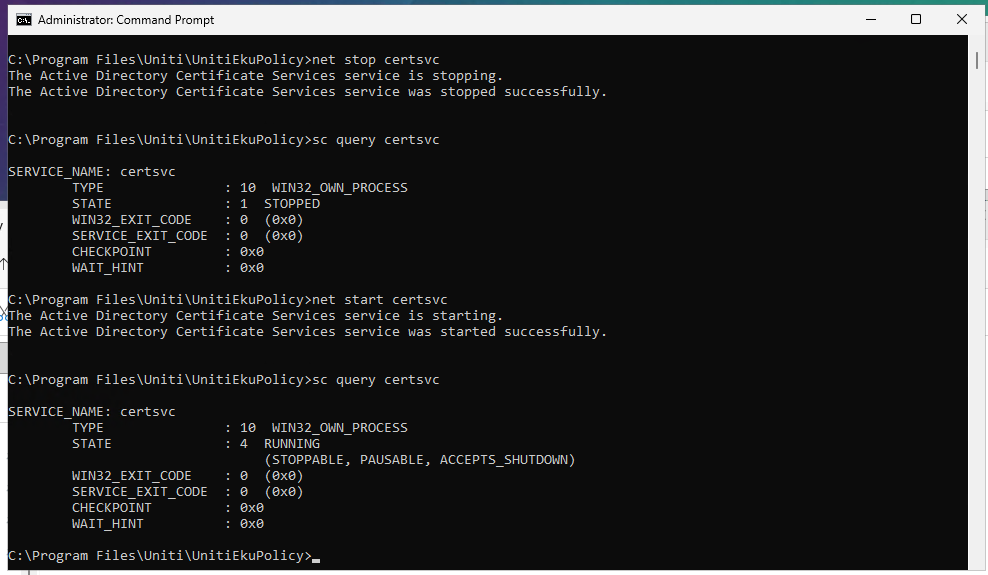

Restart the Certificate Services service (CertSvc) so that the CA loads the new policy module.