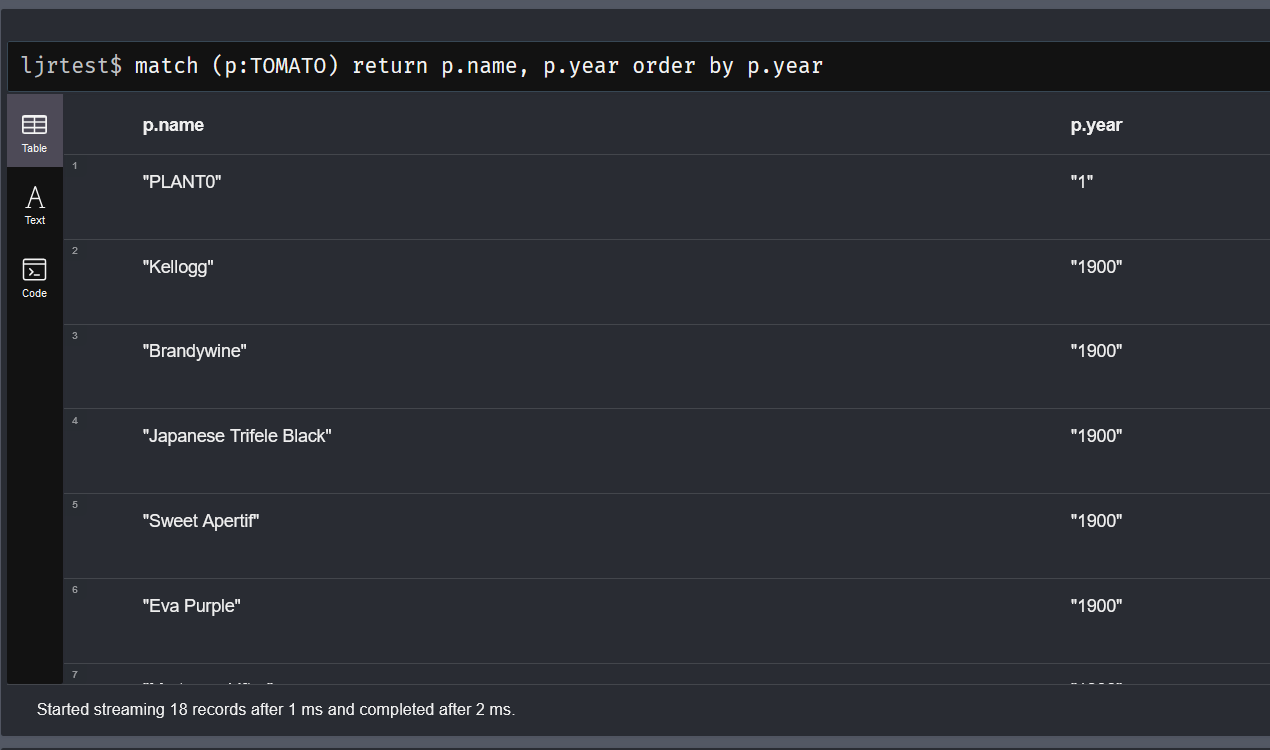

I’ve been playing around with loading data into Neo4j from random tables on the web using apoc.load.html from the extended APOC library. The first trick is knowing how to use jQuery-style selectors to find elements of a webpage: the table with the id “listtable”, then the path down to the data cells (tbody tr td), and the column numbers.
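Before pulling every column, it can help to check a single selector on its own. This is a minimal sketch, assuming the page still has a table with the id listtable and that each returned element is a map with a text key. The key name `years` is just a label I made up for illustration.

```cypher
// Grab only the first cell of each row and peek at a few results.
CALL apoc.load.html(
  "https://www.iweblists.com/us/government/PresidentialElectionResults.html",
  {years: "#listtable tbody tr td:eq(0)"}   // "years" is an arbitrary key name
) YIELD value
// value.years is a list of elements; .text holds the cell contents
RETURN [y IN value.years[0..5] | y.text] AS first_years;
```

If the list comes back empty, the selector is probably wrong or the page layout has changed.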

Once you have extracted the data, you can then manipulate it, map it into fields, create relationships, etc.

UNWIND expands a list into one row per element, so it works as a “for each” loop; here it lets us iterate through the rows of the result set by index.
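To see the index-based loop in isolation, here is a small sketch that uses a made-up list in place of scraped data. The list values are placeholders. Note that range() includes both ends, so the last valid index is the list size minus one.

```cypher
// Hypothetical sample list standing in for one scraped column.
WITH ["a", "b", "c"] AS cells
// range(0, 2) yields 0, 1, 2: one row per list index
UNWIND range(0, size(cells) - 1) AS i
RETURN i, cells[i] AS cell;
```

Indexing past the end of a list returns null in Cypher rather than raising an error, so an off-by-one here shows up as a row of nulls rather than a failure.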

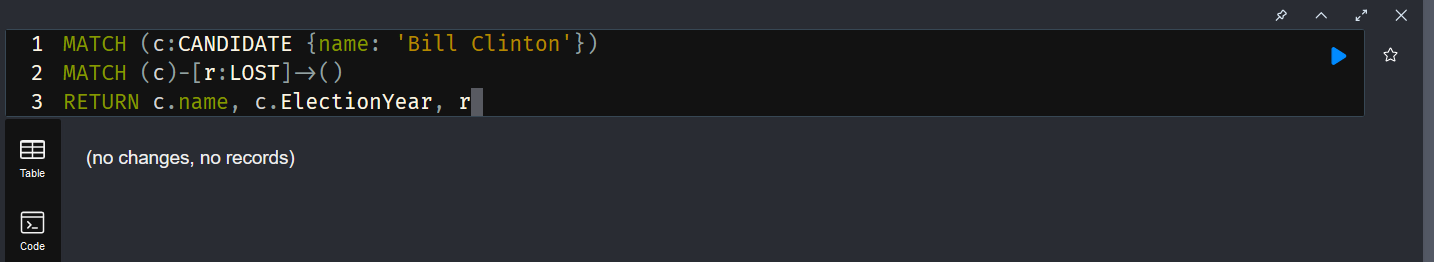

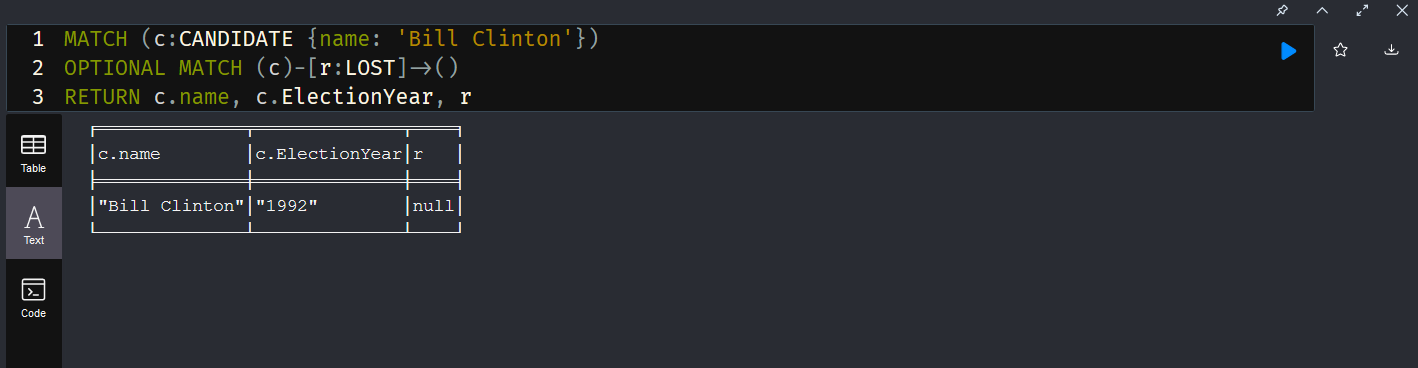

MERGE matches an existing node or creates it if none exists, which in this case means I have a poor data model: someone could well have run in multiple elections, and I am not really accommodating those cases well. Since I don’t actually want a database of presidential elections and was really just testing some new-to-me functionality, we’re going to ignore these logic problems.

SET adds (or updates) properties of the node.
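The MERGE and SET behavior can be tried on its own with a throwaway node. This is only a sketch; the name and year values are invented for illustration.

```cypher
// Running this twice still leaves exactly one matching node:
// the first run creates it, the second run matches it.
MERGE (c:Candidate {name: "Example Candidate"})
SET c.Year = "1900"       // overwrites Year on every run
RETURN c.name AS name, c.Year AS year;
```

This is also why the data model is lossy: a candidate who appears in more than one row keeps only the values from the last row processed.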

CALL apoc.load.html("https://www.iweblists.com/us/government/PresidentialElectionResults.html",

{electionyear: "#listtable tbody tr td:eq(0)"

, winner: "#listtable tbody tr td:eq(1)"

, loser: "#listtable tbody tr td:eq(2)"

, electoral_win: "#listtable tbody tr td:eq(3)"

, electoral_lose: "#listtable tbody tr td:eq(4)"

, popular_win: "#listtable tbody tr td:eq(5)"

, popular_delta: "#listtable tbody tr td:eq(6)" }) yield value

WITH value, size(value.electionyear) as rangeup

UNWIND range(0, rangeup - 1) as i WITH value.electionyear[i].text as ElectionYear

, value.winner[i].text as Winner

, value.loser[i].text as Loser

, value.electoral_win[i].text as EC_Winner

, value.electoral_lose[i].text as EC_Loser

, value.popular_win[i].text as Pop_Vote_Winner

, value.popular_delta[i].text as Pop_Vote_Delta

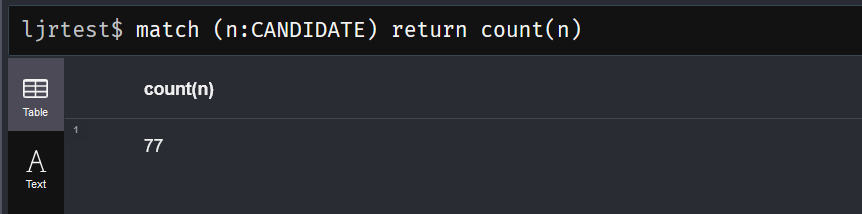

MERGE (ew:Candidate {name: coalesce(Winner,"Unknown")})

MERGE (el:Record {name: coalesce(Loser,"Unknown")})

SET ew.EC_Votes = coalesce(EC_Winner,"Unknown")

SET el.EC_Votes = coalesce(EC_Loser,"Unknown")

SET ew.Year = ElectionYear

SET el.Year = ElectionYear

WITH *, replace(Pop_Vote_Delta,",","") as Pop_Vote_Delta_Int, replace(Pop_Vote_Winner,",","") as Pop_Winner_Int

SET ew.Pop_Votes = Pop_Winner_Int

SET el.Pop_Votes = apoc.number.exact.sub(Pop_Winner_Int, Pop_Vote_Delta_Int)

MERGE (ew)-[:DEFEATED]->(el);
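The comma stripping and subtraction at the end can be checked without touching the graph. This is a minimal sketch with made-up vote totals, assuming the APOC core library is installed so that apoc.number.exact.sub is available. That function takes its operands as strings and returns the difference as a string.

```cypher
// Made-up figures, formatted with thousands separators like the scraped cells.
WITH "1,234,567" AS winner_votes, "234,567" AS margin
// Strip the commas so the values parse as plain numbers
WITH replace(winner_votes, ",", "") AS w, replace(margin, ",", "") AS d
// Loser's votes = winner's votes minus the margin
RETURN apoc.number.exact.sub(w, d) AS loser_votes;
```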