… or domain controllers, or Kerberos, or …

Back when I managed the Active Directory environment, developers would ask where they should direct traffic. Since it was all LDAP, I did the right thing and had a load-balanced virtual IP built so they could use “ad.example.com” on port 636, and all of my DCs had SANs on their SSL certs so ad.example.com was valid. But it’s not always that easy, especially if you are looking for something like the global catalog servers, or if no such VIP exists. Luckily, the fact that people are logging into the domain tells you that you can ask the internal DNS servers for all of this info, because domain controllers register service (SRV) records in DNS.

These are the records registered for all domain controllers, regardless of associated site:

_ldap: The LDAP service record is used for locating domain controllers that provide directory services over LDAP.

_ldap._tcp.example.com

_kerberos: This record is used for locating domain controllers that provide Kerberos authentication services.

_kerberos._tcp.example.com

_kpasswd: The kpasswd service record is used for finding domain controllers that can handle Kerberos principal password changes.

_kpasswd._tcp.example.com

_gc: The Global Catalog service record is used for locating domain controllers that have the Global Catalog role.

_gc._tcp.example.com

_ldap._tcp.pdc: This record identifies which domain controller holds the PDC emulator operations master role.

_ldap._tcp.pdc._msdcs.example.com
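To avoid typos when assembling these names by hand, the domain-wide records above can be generated from the domain name. This is only a minimal Python sketch: the function name `domain_srv_names` and the dictionary keys are made up for illustration, and `example.com` stands in for your own AD DNS domain.

```python
def domain_srv_names(domain: str) -> dict[str, str]:
    """Return the domain-wide SRV record names for an AD DNS domain.

    The keys ("ldap", "gc", ...) are illustrative labels, not anything
    Active Directory itself defines.
    """
    domain = domain.strip(".")  # tolerate a trailing dot such as "example.com."
    return {
        "ldap": f"_ldap._tcp.{domain}",
        "kerberos": f"_kerberos._tcp.{domain}",
        "kpasswd": f"_kpasswd._tcp.{domain}",
        "gc": f"_gc._tcp.{domain}",
        "pdc": f"_ldap._tcp.pdc._msdcs.{domain}",
    }


if __name__ == "__main__":
    # Print one record name per role for a placeholder domain.
    for role, name in domain_srv_names("example.com").items():
        print(f"{role:9} {name}")
```

Each resulting name can then be looked up as an SRV record, for example with `dig <name> SRV`.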

You can also find global catalog, Kerberos, and LDAP service records registered in individual sites. To find the servers specific to a site, use _tcp.SiteName._sites.example.com in place of _tcp.example.com.

Example: return all GCs specific to the site named “SiteXYZ”:

dig _gc._tcp.SiteXYZ._sites.example.com SRV

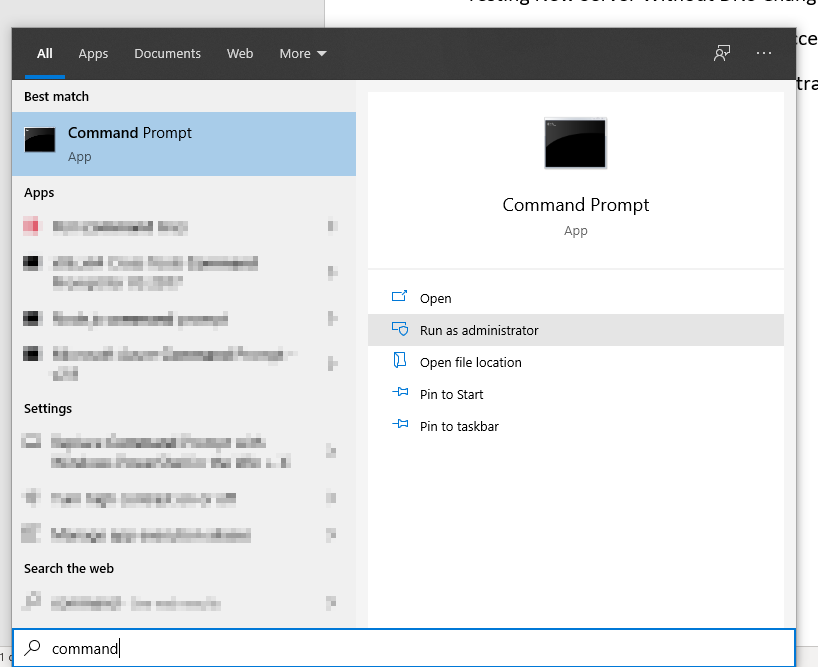
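The site-specific naming pattern can be sketched in a few lines of Python. The helper names `site_srv_name` and `dig_command` are hypothetical, and the code only builds the query name and the matching `dig` argument list; it does not run the lookup itself.

```python
def site_srv_name(service: str, site: str, domain: str) -> str:
    """Build a site-specific SRV name, e.g. _gc._tcp.SiteXYZ._sites.example.com."""
    service = service.lstrip("_")  # accept both "gc" and "_gc"
    return f"_{service}._tcp.{site}._sites.{domain.strip('.')}"


def dig_command(name: str) -> list[str]:
    """Return the argument list for the dig query shown above."""
    return ["dig", name, "SRV"]


if __name__ == "__main__":
    name = site_srv_name("gc", "SiteXYZ", "example.com")
    print(" ".join(dig_command(name)))
```

If you want to actually execute the query from a script, the list returned by `dig_command` can be handed to `subprocess.run`, assuming `dig` is installed on the machine.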

If you are using Windows, start nslookup, enter “set type=SRV” to return service records, and then query for the specific service record you want to view:

C:\Users\lisa>nslookup

Default Server: dns123.example.com

Address: 10.237.123.123

> set type=SRV

> _gc._tcp.SiteXYZ._sites.example.com

Server: dns123.example.com

Address: 10.237.123.123

_gc._tcp.SiteXYZ._sites.example.com SRV service location:

priority = 0

weight = 100

port = 3268

svr hostname = addc032.example.com
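Each SRV answer carries a priority, weight, port, and target host. Per the SRV specification (RFC 2782), clients should try the lowest priority value first and use weight to spread load among records of equal priority. The sketch below assumes you have captured the output of `dig +short <name> SRV`, which prints one `priority weight port target` line per record. The names `SrvRecord` and `parse_dig_short` are made up for illustration, and sorting by descending weight is a simplification of the weighted random selection the RFC describes.

```python
from typing import NamedTuple


class SrvRecord(NamedTuple):
    priority: int
    weight: int
    port: int
    target: str


def parse_dig_short(output: str) -> list[SrvRecord]:
    """Parse `dig +short ... SRV` output and order records for connection attempts."""
    records = []
    for line in output.splitlines():
        fields = line.split()
        if len(fields) != 4:
            continue  # skip blank or unexpected lines
        priority, weight, port, target = fields
        records.append(
            SrvRecord(int(priority), int(weight), int(port), target.rstrip("."))
        )
    # Lowest priority first; within a priority, heavier weights first.
    # (Simplification: RFC 2782 calls for weighted random selection.)
    return sorted(records, key=lambda r: (r.priority, -r.weight))


if __name__ == "__main__":
    # Sample line modelled on the nslookup answer above.
    sample = "0 100 3268 addc032.example.com.\n"
    for rec in parse_dig_short(sample):
        print(f"{rec.target}:{rec.port} (priority {rec.priority}, weight {rec.weight})")
```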